guide:0922bf5a1a: Difference between revisions

No edit summary |

mNo edit summary |

||

| Line 1: | Line 1: | ||

'''Cluster analysis''' or '''clustering''' is the task of grouping a set of objects in such a way that objects in the same group (called a '''cluster''') are more similar (in some sense) to each other than to those in other groups (clusters). It is a main task of [[wikipedia:exploratory data analysis|exploratory data analysis]], and a common technique for [[wikipedia:statistics|statistical]] [[wikipedia:data analysis|data analysis]], used in many fields, including [[wikipedia:pattern recognition|pattern recognition]], [[wikipedia:image analysis|image analysis]], [[wikipedia:information retrieval|information retrieval]], [[wikipedia:bioinformatics|bioinformatics]], [[wikipedia:data compression|data compression]], [[wikipedia:computer graphics|computer graphics]] and [[wikipedia:machine learning|machine learning]]. | |||

Cluster analysis itself is not one specific [[wikipedia:algorithm|algorithm]], but the general task to be solved. It can be achieved by various algorithms that differ significantly in their understanding of what constitutes a cluster and how to efficiently find them. Popular notions of clusters include groups with small [[wikipedia:Distance function|distances]] between cluster members, dense areas of the data space, intervals or particular [[wikipedia:statistical distribution|statistical distribution]]s. Clustering can therefore be formulated as a [[wikipedia:multi-objective optimization|multi-objective optimization]] problem. The appropriate clustering algorithm and parameter settings (including parameters such as the [[wikipedia:Metric (mathematics)|distance function]] to use, a density threshold or the number of expected clusters) depend on the individual [[wikipedia:data set|data set]] and intended use of the results. Cluster analysis as such is not an automatic task, but an iterative process of [[wikipedia:knowledge discovery|knowledge discovery]] or interactive multi-objective optimization that involves trial and failure. It is often necessary to modify [[wikipedia:data preprocessing|data preprocessing]] and model parameters until the result achieves the desired properties. | |||

== Definition == | |||

The notion of a "cluster" cannot be precisely defined, which is one of the reasons why there are so many clustering algorithms.<ref name="estivill">{{cite journal | title=Why so many clustering algorithms – A Position Paper | last = Estivill-Castro | first = Vladimir | s2cid = 7329935 | journal=ACM SIGKDD Explorations Newsletter |date=20 June 2002 | volume= 4 | issue=1 | pages=65–75 | doi=10.1145/568574.568575}}</ref> There is a common denominator: a group of data objects. However, different researchers employ different cluster models, and for each of these cluster models again different algorithms can be given. The notion of a cluster, as found by different algorithms, varies significantly in its properties. Understanding these "cluster models" is key to understanding the differences between the various algorithms. Typical cluster models include: | |||

{| class="table" | |||

|- | |||

! Cluster Model !! Description/Example | |||

|- | |||

| Connectivity models || For example, [[wikipedia:hierarchical clustering|hierarchical clustering]] builds models based on distance connectivity | |||

|- | |||

| Centroid models || For example, the [[wikipedia:k-means algorithm|k-means algorithm]] represents each cluster by a single mean vector | |||

|- | |||

| Distributional models || Clusters are modeled using statistical distributions, such as [[wikipedia:multivariate normal distribution|multivariate normal distribution]]s used by the [[wikipedia:expectation-maximization algorithm|expectation-maximization algorithm]] | |||

|- | |||

| Density models || For example, [[wikipedia:DBSCAN|DBSCAN]] and [[wikipedia:OPTICS|OPTICS]] defines clusters as connected dense regions in the data space | |||

|- | |||

| Subspace models|| In [[wikipedia:biclustering|biclustering]] (also known as co-clustering or two-mode-clustering), clusters are modeled with both cluster members and relevant attributes. | |||

|- | |||

| Group models || Some algorithms do not provide a refined model for their results and just provide the grouping information. | |||

|- | |||

| Graph-based models || A [[wikipedia:Clique (graph theory)|clique]], that is, a subset of nodes in a [[wikipedia:Graph (discrete mathematics)|graph]] such that every two nodes in the subset are connected by an edge can be considered as a prototypical form of cluster. Relaxations of the complete connectivity requirement (a fraction of the edges can be missing) are known as quasi-cliques, as in the [[wikipedia:HCS clustering algorithm|HCS clustering algorithm]]. | |||

|- | |||

| Signed graph models || Every [[wikipedia:path (graph theory)|path]] in a [[wikipedia:signed graph|signed graph]] has a [[wikipedia:sign (mathematics)|sign]] from the product of the signs on the edges. Under the assumptions of [[wikipedia:balance theory|balance theory]], edges may change sign and result in a bifurcated graph. The weaker "clusterability axiom" (no [[wikipedia:cycle (graph theory)|cycle]] has exactly one negative edge) yields results with more than two clusters, or subgraphs with only positive edges.<ref>[[wikipedia:James A. Davis|James A. Davis]] (May 1967) "Clustering and structural balance in graphs", [[wikipedia:Human Relations|Human Relations]] 20:181–7</ref> | |||

|- | |||

|Neural models || The most well known [[wikipedia:unsupervised learning|unsupervised]] [[wikipedia:neural network|neural network]] is the [[wikipedia:self-organizing map|self-organizing map]] and these models can usually be characterized as similar to one or more of the above models, and including subspace models when neural networks implement a form of [[wikipedia:Principal Component Analysis|Principal Component Analysis]] or [[wikipedia:Independent Component Analysis|Independent Component Analysis]] | |||

|} | |||

A "clustering" is essentially a set of such clusters, usually containing all objects in the data set. Additionally, it may specify the relationship of the clusters to each other, for example, a hierarchy of clusters embedded in each other. Clusterings can be roughly distinguished as: | |||

{| class="table" | |||

|- | |||

! Type !! Description | |||

|- | |||

| Hard clustering || Each object belongs to a cluster or not | |||

|- | |||

| Soft clustering || Each object belongs to each cluster to a certain degree (for example, a likelihood of belonging to the cluster) | |||

|} | |||

There are also finer distinctions possible, for example: | |||

{| class="table" | |||

|- | |||

! Type !! Description | |||

|- | |||

| Strict partitioning clustering || Each object belongs to exactly one cluster | |||

|- | |||

| Strict partitioning clustering with outliers || Objects can also belong to no cluster, and are considered [[wikipedia:Anomaly detection|outliers]] | |||

|- | |||

| Overlapping clustering (also: ''alternative clustering'', ''multi-view clustering'') || Objects may belong to more than one cluster; usually involving hard clusters | |||

|- | |||

| Hierarchical clustering || Objects that belong to a child cluster also belong to the parent cluster | |||

|- | |||

|[[wikipedia:Subspace clustering|Subspace clustering]] || While an overlapping clustering, within a uniquely defined subspace, clusters are not expected to overlap | |||

|} | |||

== Algorithms == | |||

As listed above, clustering algorithms can be categorized based on their cluster model. The following overview will only list the most prominent examples of clustering algorithms, as there are possibly over 100 published clustering algorithms. Not all provide models for their clusters and can thus not easily be categorized. | |||

There is no objectively "correct" clustering algorithm, but as it was noted, "clustering is in the eye of the beholder."<ref name="estivill" /> The most appropriate clustering algorithm for a particular problem often needs to be chosen experimentally, unless there is a mathematical reason to prefer one cluster model over another. An algorithm that is designed for one kind of model will generally fail on a data set that contains a radically different kind of model.<ref name="estivill" /> For example, k-means cannot find non-convex clusters.<ref name="estivill" /> | |||

=== Connectivity-based clustering (hierarchical clustering) === | |||

Connectivity-based clustering, also known as ''[[wikipedia:hierarchical clustering|hierarchical clustering]]'', is based on the core idea of objects being more related to nearby objects than to objects farther away. These algorithms connect "objects" to form "clusters" based on their distance. A cluster can be described largely by the maximum distance needed to connect parts of the cluster. At different distances, different clusters will form, which can be represented using a [[wikipedia:dendrogram|dendrogram]], which explains where the common name "[[wikipedia:hierarchical clustering|hierarchical clustering]]" comes from: these algorithms do not provide a single partitioning of the data set, but instead provide an extensive hierarchy of clusters that merge with each other at certain distances. In a dendrogram, the y-axis marks the distance at which the clusters merge, while the objects are placed along the x-axis such that the clusters don't mix. | |||

Connectivity-based clustering is a whole family of methods that differ by the way distances are computed. Apart from the usual choice of [[wikipedia:distance function|distance function]]s, the user also needs to decide on the linkage criterion (since a cluster consists of multiple objects, there are multiple candidates to compute the distance) to use. Popular choices are known as [[wikipedia:single-linkage clustering|single-linkage clustering]] (the minimum of object distances), [[wikipedia:complete linkage clustering|complete linkage clustering]] (the maximum of object distances), and [[wikipedia:UPGMA|UPGMA]] or [[wikipedia:WPGMA|WPGMA]] ("Unweighted or Weighted Pair Group Method with Arithmetic Mean", also known as average linkage clustering). Furthermore, hierarchical clustering can be agglomerative (starting with single elements and aggregating them into clusters) or divisive (starting with the complete data set and dividing it into partitions). | |||

These methods will not produce a unique partitioning of the data set, but a hierarchy from which the user still needs to choose appropriate clusters. They are not very robust towards outliers, which will either show up as additional clusters or even cause other clusters to merge (known as "chaining phenomenon", in particular with [[wikipedia:single-linkage clustering|single-linkage clustering]]). In the general case, the complexity is <math>\mathcal{O}(n^3)</math> for agglomerative clustering and <math>\mathcal{O}(2^{n-1})</math> for [[wikipedia:divisive clustering|divisive clustering]],<ref>{{cite book | last = Everitt | first = Brian | title = Cluster analysis | publisher = Wiley | location = Chichester, West Sussex, U.K | year = 2011 | isbn = 9780470749913 }}</ref> which makes them too slow for large data sets. For some special cases, optimal efficient methods (of complexity <math>\mathcal{O}(n^2)</math>) are known: SLINK<ref>{{cite journal | first = R. | last = Sibson | title=SLINK: an optimally efficient algorithm for the single-link cluster method | journal=The Computer Journal | volume=16 | issue=1 | pages=30–34 | year=1973 | publisher=British Computer Society | url=http://www.cs.gsu.edu/~wkim/index_files/papers/sibson.pdf | doi=10.1093/comjnl/16.1.30 }}</ref> for single-linkage and CLINK<ref>{{cite journal | first = D. | last = Defays | title=An efficient algorithm for a complete link method | journal=The Computer Journal | volume=20 | issue=4 | pages=364–366 | year=1977 | publisher=British Computer Society | doi=10.1093/comjnl/20.4.364}}</ref> for complete-linkage clustering. | |||

<gallery caption="Linkage clustering examples" widths="300px" heights="300px" class="text-center"> | |||

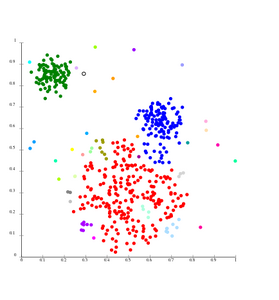

File:SLINK-Gaussian-data.svg|Single-linkage on Gaussian data. At 35 clusters, the biggest cluster starts fragmenting into smaller parts, while before it was still connected to the second largest due to the single-link effect. | |||

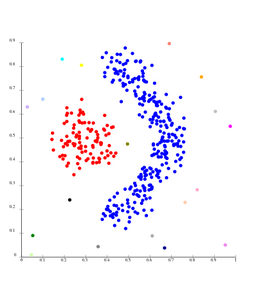

File:SLINK-density-data.svg|Single-linkage on density-based clusters. 20 clusters extracted, most of which contain single elements, since linkage clustering does not have a notion of "noise". | |||

</gallery> | |||

=== Centroid-based clustering === | |||

In centroid-based clustering, each cluster is represented by a central vector, which is not necessarily a member of the data set. When the number of clusters is fixed to ''k'', [[wikipedia:k-means clustering|''k''-means clustering]] gives a formal definition as an optimization problem: find the ''k'' cluster centers and assign the objects to the nearest cluster center, such that the squared distances from the cluster are minimized. | |||

The optimization problem itself is known to be [[wikipedia:NP-hard|NP-hard]], and thus the common approach is to search only for approximate solutions. A particularly well known approximate method is [[wikipedia:Lloyd's algorithm|Lloyd's algorithm]],<ref name="lloyd">{{Cite journal | last1 = Lloyd | first1 = S. | title = Least squares quantization in PCM | doi = 10.1109/TIT.1982.1056489 | journal = IEEE Transactions on Information Theory | volume = 28 | issue = 2 | pages = 129–137 | year = 1982 }}</ref> often just referred to as "''k-means algorithm''" (although [[wikipedia:k-means clustering#History|another algorithm introduced this name]]). It does however only find a [[wikipedia:local optimum|local optimum]], and is commonly run multiple times with different random initializations. Variations of ''k''-means often include such optimizations as choosing the best of multiple runs, but also restricting the centroids to members of the data set ([[wikipedia:K-medoids|''k''-medoids]]), choosing [[wikipedia:median|median]]s ([[wikipedia:k-medians clustering|''k''-medians clustering]]), choosing the initial centers less randomly ([[wikipedia:k-means++|''k''-means++]]) or allowing a fuzzy cluster assignment ([[wikipedia:Fuzzy clustering|fuzzy c-means]]). | |||

Most ''k''-means-type algorithms require the [[wikipedia:Determining the number of clusters in a data set|number of clusters]] – ''k'' – to be specified in advance, which is considered to be one of the biggest drawbacks of these algorithms. Furthermore, the algorithms prefer clusters of approximately similar size, as they will always assign an object to the nearest centroid. This often leads to incorrectly cut borders of clusters (which is not surprising since the algorithm optimizes cluster centers, not cluster borders). | |||

K-means has a number of interesting theoretical properties. First, it partitions the data space into a structure known as a [[wikipedia:Voronoi diagram|Voronoi diagram]]. Second, it is conceptually close to nearest neighbor classification, and as such is popular in [[wikipedia:machine learning|machine learning]]. Third, it can be seen as a variation of model based clustering, and Lloyd's algorithm as a variation of the [[wikipedia:Expectation-maximization algorithm|Expectation-maximization algorithm]] for this model discussed below. | |||

<gallery caption="''k''-means clustering examples" widths="300" heights="300" class="text-center"> | |||

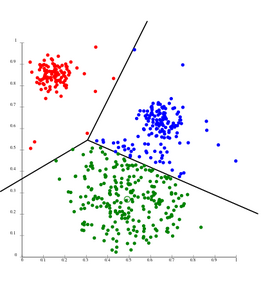

File:KMeans-Gaussian-data.svg|''k''-means separates data into Voronoi cells, which assumes equal-sized clusters (not adequate here) | |||

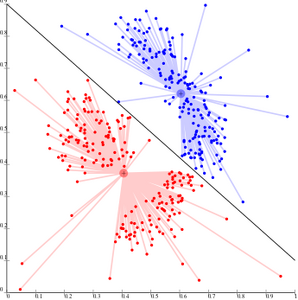

File:KMeans-density-data.svg|''k''-means cannot represent density-based clusters | |||

</gallery> | |||

Centroid-based clustering problems such as ''k''-means and ''k''-medoids are special cases of the uncapacitated, metric [[wikipedia:facility location problem|facility location problem]], a canonical problem in the operations research and computational geometry communities. In a basic facility location problem (of which there are numerous variants that model more elaborate settings), the task is to find the best warehouse locations to optimally service a given set of consumers. One may view "warehouses" as cluster centroids and "consumer locations" as the data to be clustered. This makes it possible to apply the well-developed algorithmic solutions from the facility location literature to the presently considered centroid-based clustering problem. | |||

==References== | |||

{{Reflist}} | |||

==Wikipedia References== | |||

*{{cite web |url = https://en.wikipedia.org/w/index.php?title=Cluster_analysis&oldid=1104837324|title=Cluster analysis | author = Wikipedia contributors |website= Wikipedia |publisher= Wikipedia |access-date = 17 August 2022 }} | |||

Revision as of 02:29, 19 August 2022

Cluster analysis or clustering is the task of grouping a set of objects in such a way that objects in the same group (called a cluster) are more similar (in some sense) to each other than to those in other groups (clusters). It is a main task of exploratory data analysis, and a common technique for statistical data analysis, used in many fields, including pattern recognition, image analysis, information retrieval, bioinformatics, data compression, computer graphics and machine learning.

Cluster analysis itself is not one specific algorithm, but the general task to be solved. It can be achieved by various algorithms that differ significantly in their understanding of what constitutes a cluster and how to efficiently find them. Popular notions of clusters include groups with small distances between cluster members, dense areas of the data space, intervals or particular statistical distributions. Clustering can therefore be formulated as a multi-objective optimization problem. The appropriate clustering algorithm and parameter settings (including parameters such as the distance function to use, a density threshold or the number of expected clusters) depend on the individual data set and intended use of the results. Cluster analysis as such is not an automatic task, but an iterative process of knowledge discovery or interactive multi-objective optimization that involves trial and failure. It is often necessary to modify data preprocessing and model parameters until the result achieves the desired properties.

Definition

The notion of a "cluster" cannot be precisely defined, which is one of the reasons why there are so many clustering algorithms.[1] There is a common denominator: a group of data objects. However, different researchers employ different cluster models, and for each of these cluster models again different algorithms can be given. The notion of a cluster, as found by different algorithms, varies significantly in its properties. Understanding these "cluster models" is key to understanding the differences between the various algorithms. Typical cluster models include:

| Cluster Model | Description/Example |

|---|---|

| Connectivity models | For example, hierarchical clustering builds models based on distance connectivity |

| Centroid models | For example, the k-means algorithm represents each cluster by a single mean vector |

| Distributional models | Clusters are modeled using statistical distributions, such as multivariate normal distributions used by the expectation-maximization algorithm |

| Density models | For example, DBSCAN and OPTICS defines clusters as connected dense regions in the data space |

| Subspace models | In biclustering (also known as co-clustering or two-mode-clustering), clusters are modeled with both cluster members and relevant attributes. |

| Group models | Some algorithms do not provide a refined model for their results and just provide the grouping information. |

| Graph-based models | A clique, that is, a subset of nodes in a graph such that every two nodes in the subset are connected by an edge can be considered as a prototypical form of cluster. Relaxations of the complete connectivity requirement (a fraction of the edges can be missing) are known as quasi-cliques, as in the HCS clustering algorithm. |

| Signed graph models | Every path in a signed graph has a sign from the product of the signs on the edges. Under the assumptions of balance theory, edges may change sign and result in a bifurcated graph. The weaker "clusterability axiom" (no cycle has exactly one negative edge) yields results with more than two clusters, or subgraphs with only positive edges.[2] |

| Neural models | The most well known unsupervised neural network is the self-organizing map and these models can usually be characterized as similar to one or more of the above models, and including subspace models when neural networks implement a form of Principal Component Analysis or Independent Component Analysis |

A "clustering" is essentially a set of such clusters, usually containing all objects in the data set. Additionally, it may specify the relationship of the clusters to each other, for example, a hierarchy of clusters embedded in each other. Clusterings can be roughly distinguished as:

| Type | Description |

|---|---|

| Hard clustering | Each object belongs to a cluster or not |

| Soft clustering | Each object belongs to each cluster to a certain degree (for example, a likelihood of belonging to the cluster) |

There are also finer distinctions possible, for example:

| Type | Description |

|---|---|

| Strict partitioning clustering | Each object belongs to exactly one cluster |

| Strict partitioning clustering with outliers | Objects can also belong to no cluster, and are considered outliers |

| Overlapping clustering (also: alternative clustering, multi-view clustering) | Objects may belong to more than one cluster; usually involving hard clusters |

| Hierarchical clustering | Objects that belong to a child cluster also belong to the parent cluster |

| Subspace clustering | While an overlapping clustering, within a uniquely defined subspace, clusters are not expected to overlap |

Algorithms

As listed above, clustering algorithms can be categorized based on their cluster model. The following overview will only list the most prominent examples of clustering algorithms, as there are possibly over 100 published clustering algorithms. Not all provide models for their clusters and can thus not easily be categorized.

There is no objectively "correct" clustering algorithm, but as it was noted, "clustering is in the eye of the beholder."[1] The most appropriate clustering algorithm for a particular problem often needs to be chosen experimentally, unless there is a mathematical reason to prefer one cluster model over another. An algorithm that is designed for one kind of model will generally fail on a data set that contains a radically different kind of model.[1] For example, k-means cannot find non-convex clusters.[1]

Connectivity-based clustering (hierarchical clustering)

Connectivity-based clustering, also known as hierarchical clustering, is based on the core idea of objects being more related to nearby objects than to objects farther away. These algorithms connect "objects" to form "clusters" based on their distance. A cluster can be described largely by the maximum distance needed to connect parts of the cluster. At different distances, different clusters will form, which can be represented using a dendrogram, which explains where the common name "hierarchical clustering" comes from: these algorithms do not provide a single partitioning of the data set, but instead provide an extensive hierarchy of clusters that merge with each other at certain distances. In a dendrogram, the y-axis marks the distance at which the clusters merge, while the objects are placed along the x-axis such that the clusters don't mix.

Connectivity-based clustering is a whole family of methods that differ by the way distances are computed. Apart from the usual choice of distance functions, the user also needs to decide on the linkage criterion (since a cluster consists of multiple objects, there are multiple candidates to compute the distance) to use. Popular choices are known as single-linkage clustering (the minimum of object distances), complete linkage clustering (the maximum of object distances), and UPGMA or WPGMA ("Unweighted or Weighted Pair Group Method with Arithmetic Mean", also known as average linkage clustering). Furthermore, hierarchical clustering can be agglomerative (starting with single elements and aggregating them into clusters) or divisive (starting with the complete data set and dividing it into partitions).

These methods will not produce a unique partitioning of the data set, but a hierarchy from which the user still needs to choose appropriate clusters. They are not very robust towards outliers, which will either show up as additional clusters or even cause other clusters to merge (known as "chaining phenomenon", in particular with single-linkage clustering). In the general case, the complexity is [math]\mathcal{O}(n^3)[/math] for agglomerative clustering and [math]\mathcal{O}(2^{n-1})[/math] for divisive clustering,[3] which makes them too slow for large data sets. For some special cases, optimal efficient methods (of complexity [math]\mathcal{O}(n^2)[/math]) are known: SLINK[4] for single-linkage and CLINK[5] for complete-linkage clustering.

- Linkage clustering examples

-

Single-linkage on Gaussian data. At 35 clusters, the biggest cluster starts fragmenting into smaller parts, while before it was still connected to the second largest due to the single-link effect.

-

Single-linkage on density-based clusters. 20 clusters extracted, most of which contain single elements, since linkage clustering does not have a notion of "noise".

Centroid-based clustering

In centroid-based clustering, each cluster is represented by a central vector, which is not necessarily a member of the data set. When the number of clusters is fixed to k, k-means clustering gives a formal definition as an optimization problem: find the k cluster centers and assign the objects to the nearest cluster center, such that the squared distances from the cluster are minimized.

The optimization problem itself is known to be NP-hard, and thus the common approach is to search only for approximate solutions. A particularly well known approximate method is Lloyd's algorithm,[6] often just referred to as "k-means algorithm" (although another algorithm introduced this name). It does however only find a local optimum, and is commonly run multiple times with different random initializations. Variations of k-means often include such optimizations as choosing the best of multiple runs, but also restricting the centroids to members of the data set (k-medoids), choosing medians (k-medians clustering), choosing the initial centers less randomly (k-means++) or allowing a fuzzy cluster assignment (fuzzy c-means).

Most k-means-type algorithms require the number of clusters – k – to be specified in advance, which is considered to be one of the biggest drawbacks of these algorithms. Furthermore, the algorithms prefer clusters of approximately similar size, as they will always assign an object to the nearest centroid. This often leads to incorrectly cut borders of clusters (which is not surprising since the algorithm optimizes cluster centers, not cluster borders).

K-means has a number of interesting theoretical properties. First, it partitions the data space into a structure known as a Voronoi diagram. Second, it is conceptually close to nearest neighbor classification, and as such is popular in machine learning. Third, it can be seen as a variation of model based clustering, and Lloyd's algorithm as a variation of the Expectation-maximization algorithm for this model discussed below.

- k-means clustering examples

-

k-means separates data into Voronoi cells, which assumes equal-sized clusters (not adequate here)

-

k-means cannot represent density-based clusters

Centroid-based clustering problems such as k-means and k-medoids are special cases of the uncapacitated, metric facility location problem, a canonical problem in the operations research and computational geometry communities. In a basic facility location problem (of which there are numerous variants that model more elaborate settings), the task is to find the best warehouse locations to optimally service a given set of consumers. One may view "warehouses" as cluster centroids and "consumer locations" as the data to be clustered. This makes it possible to apply the well-developed algorithmic solutions from the facility location literature to the presently considered centroid-based clustering problem.

References

- Jump up to: 1.0 1.1 1.2 1.3 Estivill-Castro, Vladimir (20 June 2002). "Why so many clustering algorithms – A Position Paper". ACM SIGKDD Explorations Newsletter 4 (1): 65–75. doi:.

- James A. Davis (May 1967) "Clustering and structural balance in graphs", Human Relations 20:181–7

- Everitt, Brian (2011). Cluster analysis. Chichester, West Sussex, U.K: Wiley. ISBN 9780470749913.

- Sibson, R. (1973). "SLINK: an optimally efficient algorithm for the single-link cluster method". The Computer Journal 16 (1): 30–34. British Computer Society. doi:.

- Defays, D. (1977). "An efficient algorithm for a complete link method". The Computer Journal 20 (4): 364–366. British Computer Society. doi:.

- "Least squares quantization in PCM" (1982). IEEE Transactions on Information Theory 28 (2): 129–137. doi:.

Wikipedia References

- Wikipedia contributors. "Cluster analysis". Wikipedia. Wikipedia. Retrieved 17 August 2022.