guide:Ec36399528: Difference between revisions


| Line 131: | Line 131: | ||

\leavevmode\unskip\penalty9999 \hbox{}\nobreak\hfill | \leavevmode\unskip\penalty9999 \hbox{}\nobreak\hfill | ||

\quad\hbox{#1}} | \quad\hbox{#1}} | ||

\newcommand | \newcommand{\xqed{$\triangle$}} | ||

\newcommand\independent{\protect\mathpalette{\protect\independenT}{\perp}} | \newcommand\independent{\protect\mathpalette{\protect\independenT}{\perp}} | ||

\DeclareMathOperator{\Int}{Int} | \DeclareMathOperator{\Int}{Int} | ||

| Line 147: | Line 147: | ||

</math> | </math> | ||

</div> | </div> | ||

The literature reviewed in this chapter starts with the analysis of what can be learned about functionals of probability distributions that are well-defined in the absence of a model.

The approach is nonparametric, and it is typically ''constructive'', in the sense that it leads to “plug-in” formulae for the bounds on the functionals of interest.

| Line 159: | Line 159: | ||

The question is: what can the researcher learn about <math>\E_\sQ(\ey|\ex=x)</math>, with <math>\sQ</math> the distribution of <math>(\ey,\ex)</math>?

<ref name="man89"></ref> showed that <math>\E_\sQ(\ey|\ex=x)</math> is not point identified in the absence of additional assumptions, but that informative nonparametric bounds on this quantity can be obtained.

In this section I review his approach and discuss several important extensions of his original idea.

Throughout the chapter, I formally state the structure of the problem under study as an “Identification Problem”, and then provide a solution, either in the form of a sharp identification region or of an outer region.

To set the stage, and at the cost of some repetition, I do the same here, slightly generalizing the question stated in the previous paragraph.

{{proofcard|Identification Problem (Conditional Expectation of Selectively Observed Data)|IP:bounds:mean:md|

Let <math>\ey \in \mathcal{Y}\subset \R</math> and <math>\ex \in \mathcal{X}\subset \R^d</math> be, respectively, an outcome variable and a vector of covariates, with supports <math>\cY</math> and <math>\cX</math>, and with <math>\cY</math> a compact set.

Let <math>\ed \in \{0,1\}</math>.

| Line 171: | Line 170: | ||

Let <math>y_{j}\in\cY</math> be such that <math>g(y_j)=g_j</math>, <math>j=0,1</math>.<ref group="Notes" >The bounds <math>g_0,g_1</math> and the values <math>y_0,y_1</math> at which they are attained may differ for different functions <math>g(\cdot)</math>.</ref>

In the absence of additional information, what can the researcher learn about <math>\E_\sQ(g(\ey)|\ex=x)</math>, with <math>\sQ</math> the distribution of <math>(\ey,\ex)</math>?

|}}

<ref name="man89"></ref>’s analysis of this problem begins with a simple application of the law of total probability, which yields

| Line 184: | Line 183: | ||

Hence, <math>\sQ(\ey|\ex=x)</math> is not point identified.

If one were to assume ''exogenous selection'' (or data missing at random conditional on <math>\ex</math>), i.e., <math>\sR(\ey|\ex,\ed=0)=\sP(\ey|\ex,\ed=1)</math>, point identification would obtain.

However, that assumption is non-refutable, and it is well known that it may fail in applications.<ref group="Notes" >[[guide:7b0105e1fc#sec:misspec |Section]] discusses the consequences of model misspecification (with respect to refutable assumptions).</ref>

Let <math>\cT</math> denote the space of all probability measures with support in <math>\cY</math>. The unknown functional vector is <math>\{\tau(x),\upsilon(x)\}\equiv \{\sQ(\ey|\ex=x),\sR(\ey|\ex=x,\ed=0)\}</math>.

What the researcher can learn, in the absence of additional restrictions on <math>\sR(\ey|\ex=x,\ed=0)</math>, is the region of ''observationally equivalent'' distributions for <math>\ey|\ex=x</math>, and the associated set of expectations taken with respect to these distributions.
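To make observational equivalence concrete, here is a minimal Python sketch with hypothetical numbers: a three-point support, <math>\sP(\ed=1)=0.7</math>, and observed-outcome probabilities chosen only for illustration, with covariates omitted. It applies the law of total probability to two different choices of <math>\upsilon</math>. Both choices are compatible with the same observed <math>\sP(\ey|\ed=1)</math> and <math>\sP(\ed=1)</math>, yet they imply different distributions <math>\tau</math> and different means.

```python
# Hypothetical finite outcome support {0, 1, 2}; the covariate x is omitted.
support = [0.0, 1.0, 2.0]
p_obs = [0.5, 0.3, 0.2]   # P(y | d=1): revealed by the data (illustrative values)
p_d1 = 0.7                # P(d=1): revealed by the data (illustrative value)

def implied_q(upsilon):
    """Law of total probability: Q(y) = P(y|d=1)P(d=1) + R(y|d=0)P(d=0)."""
    return [p * p_d1 + u * (1.0 - p_d1) for p, u in zip(p_obs, upsilon)]

def mean(dist):
    """Expectation of y under a distribution on `support`."""
    return sum(y * q for y, q in zip(support, dist))

# Two guesses for the unobserved R(y | d=0): point masses at the smallest and
# largest support points. Nothing in the data can tell them apart.
q_low = implied_q([1.0, 0.0, 0.0])
q_high = implied_q([0.0, 0.0, 1.0])
print(mean(q_low), mean(q_high))  # two different values of E_Q(y)
```

Any other <math>\upsilon\in\cT</math> would produce yet another admissible <math>\tau</math>. The two point masses used here also generate the extreme means, as in the worst case bounds that follow.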

{{proofcard|Theorem (Conditional Expectations of Selectively Observed Data)|SIR:prob:E:md|Under the assumptions in Identification [[#IP:bounds:mean:md |Problem]],

<math display="block"> | <math display="block"> | ||

| Line 198: | Line 196: | ||

</math> | </math> | ||

is the sharp identification region for <math>\E_\sQ(g(\ey)|\ex=x)</math>.

| | |||

Due to the discussion following equation \eqref{eq:LTP_md}, the collection of observationally equivalent distribution functions for <math>\ey|\ex=x</math> is

| Line 210: | Line 208: | ||

Next, observe that the lower bound in equation \eqref{eq:bounds:mean:md} is achieved by integrating <math>g(\ey)</math> against the distribution <math>\tau(x)</math> that results when <math>\upsilon(x)</math> places probability one on <math>y_0</math>. The upper bound is achieved by integrating <math>g(\ey)</math> against the distribution <math>\tau(x)</math> that results when <math>\upsilon(x)</math> places probability one on <math>y_1</math>.

Both are contained in the set <math>\idr{\sQ(\ey|\ex=x)}</math> in equation \eqref{eq:Tau_md}. Since this set is convex and the expectation is linear in <math>\tau(x)</math>, every value between the two bounds is attained as well.

}}

These are the ''worst case bounds'', so called because they are assumption-free and therefore represent the widest possible range of values for the parameter of interest that are consistent with the observed data.

A simple “plug-in” estimator for <math>\idr{\E_\sQ(g(\ey)|\ex=x)}</math> replaces all unknown quantities in \eqref{eq:bounds:mean:md} with consistent estimators, obtained, e.g., by kernel or sieve regression.
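The following is a minimal sketch of the plug-in idea. It assumes a discrete covariate, so that within-cell sample frequencies and averages can stand in for the kernel or sieve estimators mentioned above. The function name and argument layout are hypothetical. The sketch evaluates the two endpoints of the worst case bounds by setting the missing outcomes to <math>y_0</math> or <math>y_1</math>, as in the proof of the theorem.

```python
def worst_case_bounds(g_y, d, x, x0, g0, g1):
    """Plug-in worst case bounds on E_Q(g(y) | x = x0) for a discrete covariate.

    g_y    : values g(y_i); entries with d_i = 0 are never used (they may be None).
    d      : 0/1 indicators, d_i = 1 if y_i is observed.
    x      : discrete covariate values; within-cell sample frequencies stand in
             for kernel or sieve estimators (an assumption of this sketch).
    g0, g1 : known lower and upper bounds on g over the outcome support.
    """
    cell = [i for i, xi in enumerate(x) if xi == x0]
    if not cell:
        raise ValueError("no observations with x == x0")
    observed = [g_y[i] for i in cell if d[i] == 1]
    p1 = len(observed) / len(cell)                            # estimate of P(d=1 | x=x0)
    m1 = sum(observed) / len(observed) if observed else 0.0   # estimate of E_P(g(y) | x=x0, d=1)
    lower = m1 * p1 + g0 * (1 - p1)   # missing outcomes set to y_0, where g attains g0
    upper = m1 * p1 + g1 * (1 - p1)   # missing outcomes set to y_1, where g attains g1
    return lower, upper

# Hypothetical data: g is the identity and y takes values in [0, 1], so g0 = 0, g1 = 1.
y = [1.0, 0.0, None, 1.0, None, 0.0]
d = [1, 1, 0, 1, 0, 1]
x = [0, 0, 0, 1, 1, 1]
lo, hi = worst_case_bounds(y, d, x, x0=0, g0=0.0, g1=1.0)
```

In this example the estimated bounds are <math>[1/3, 2/3]</math>. Their width, <math>(g_1-g_0)\,\hat\sP(\ed=0|\ex=x)=1/3</math>, shrinks only as the estimated fraction of missing outcomes in the cell falls.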

| Line 244: | Line 242: | ||

<ref name="sto10"></ref> further extends partial identification analysis to the study of spread parameters in the presence of missing data (as well as interval data, data combinations, and other applications).

These parameters include ones that respect second order stochastic dominance, such as the variance, the Gini coefficient, and other inequality measures, as well as other measures of dispersion that do not respect second order stochastic dominance, such as the interquartile range and the interquartile ratio.<ref group="Notes" >

Earlier related work includes, e.g., {{ref|name=gas72}} and {{ref|name=cow91}}, who obtain worst case bounds on the sample Gini coefficient under the assumption that one knows the income bracket but not the exact income of every household.</ref>

<ref name="sto10"></ref> shows that the sharp identification region for these parameters can be obtained by fixing the mean or quantile of the variable of interest at a specific value within its sharp identification region, and deriving two distributions consistent with this value: one that is “compressed” with respect to the distributions that bound the cumulative distribution function (CDF) of the variable of interest, and one that is “dispersed” with respect to them.

Heuristically, the compressed distribution minimizes spread, while the dispersed one maximizes it (the sense in which this optimization occurs is formally defined in the paper).

| Line 251: | Line 249: | ||

The main results of the paper are sharp identification regions for the expectation and variance, for the median and interquartile ratio, and for many other combinations of parameters.

'''Key Insight (Identification is not a binary event):'''

<span id="big_idea:id_not_binary"/>

<i>Identification [[#IP:bounds:mean:md |Problem]] is mathematically simple, but it puts forward a new approach to empirical research.

The traditional approach aims at finding a sufficient (possibly minimal) set of assumptions guaranteeing point identification of parameters, viewing identification as an “all or nothing” notion, where either the functional of interest can be learned exactly or nothing of value can be learned.

The partial identification approach pioneered by <ref name="man89"></ref> points out that much can be learned from the combination of data and assumptions that restrict the functionals of interest to a set of observationally equivalent values, even if this set is not a singleton.

Along the way, <ref name="man89"></ref> points out that in Identification [[#IP:bounds:mean:md |Problem]] the observed outcome is the singleton <math>\ey</math> when <math>\ed=1</math>, and the set <math>\cY</math> when <math>\ed=0</math>.

This is a random closed set (see [[guide:379e0dcd67#def:rcs |Definition]]).

I return to this connection in Section [[#subsec:interval_data |Interval Data]].</i>

Despite how transparent the framework in Identification [[#IP:bounds:mean:md |Problem]] is, important subtleties arise even in this seemingly simple context.

For a given <math>t\in\R</math>, consider the function <math>g(\ey)=\one(\ey\le t)</math>, with <math>\one(A)</math> the indicator function taking the value one if the logical condition in parentheses holds and zero otherwise.

| Line 287: | Line 285: | ||

is an outer region for the CDF of <math>\ey|\ex=x</math>.

\end{OR} | \end{OR} | ||

| | |||

Any admissible CDF for <math>\ey|\ex=x</math> belongs to the family of functions in equation \eqref{eq:outer_cdf_md}. However, the bound in equation \eqref{eq:outer_cdf_md} does not impose the restriction that for any <math>t_0\le t_1</math>,

| Line 297: | Line 295: | ||

</math> | </math> | ||

This restriction is implied by the maintained assumptions, but is not necessarily satisfied by all CDFs in <math>\outr{\sF(\ey|\ex=x)}</math>, as illustrated in the following simple example.

}}

<div id="fig:boundsCDF:md" class="d-flex justify-content-center"> | <div id="fig:boundsCDF:md" class="d-flex justify-content-center"> | ||

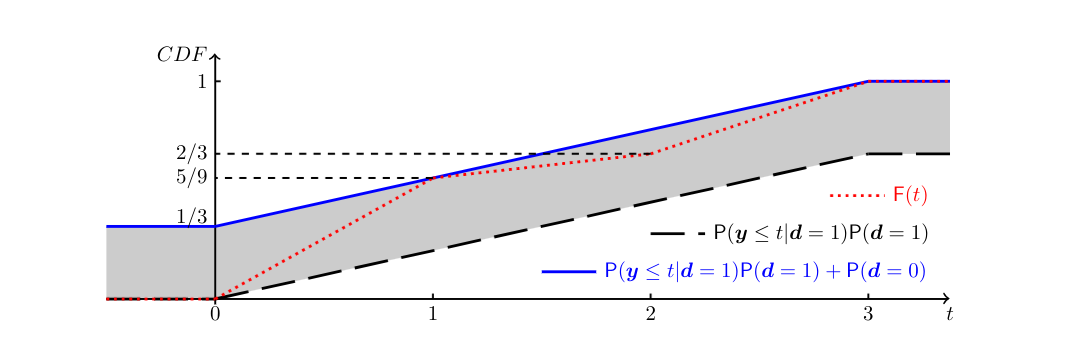

[[File:guide_d9532_fig_boundsCDF_md.png | | [[File:guide_d9532_fig_boundsCDF_md.png | 700px | thumb | The tube defined by inequalities \eqref{eq:pointwise_bounds_F_md} in the set-up of [[#example:CDF_md |Example]], and the CDF in \eqref{eq:CDF_counterexample_md}. ]] | ||

</div> | </div> | ||

<span id="example:CDF_md"/>

'''Example'''

Omit <math>\ex</math> for simplicity, let <math>\sP(\ed=1)=\frac{2}{3}</math>, and let

| Line 316: | Line 316: | ||

\end{align*} | \end{align*} | ||

</math> | </math> | ||

The bounding functions and associated tube from the inequalities in \eqref{eq:pointwise_bounds_F_md} are depicted in [[#fig:boundsCDF:md|Figure]].

Consider the cumulative distribution function

| Line 336: | Line 337: | ||

For each <math>t\in\R</math>, <math>\sF(t)</math> lies in the tube defined by the inequalities in \eqref{eq:pointwise_bounds_F_md}.

However, it cannot be the CDF of <math>\ey</math>, because <math>\sF(2)-\sF(1)=\frac{1}{9} < \sP(1\le\ey\le 2|\ed=1)\sP(\ed=1)</math>, directly contradicting equation \eqref{eq:CDF_md_Kinterval}.

\end{examp} | \end{examp} | ||
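The following Python sketch checks a candidate CDF against both the pointwise tube and the interval restriction. It uses hypothetical data, not the distribution of the example. The sketch makes two assumptions: the checks are carried out only on a finite grid of points, and the interval restriction is applied to half-open intervals <math>(t_0,t_1]</math>, which is what <math>\sF(t_1)-\sF(t_0)</math> measures. The function and variable names are illustrative only.

```python
def check_candidate_cdf(F, grid, y_obs, p_d1, tol=1e-12):
    """Return (in_tube, interval_ok) for a candidate CDF F of y (covariates omitted).

    in_tube     : P(y<=t|d=1)P(d=1) <= F(t) <= P(y<=t|d=1)P(d=1) + P(d=0) at each grid t.
    interval_ok : F(t1) - F(t0) >= P(t0 < y <= t1 | d=1) P(d=1) for all grid t0 < t1.
    Only the points in `grid` (sorted increasingly) are checked.
    """
    n_obs = len(y_obs)
    Fg = [F(t) for t in grid]
    # Empirical P(y <= t | d=1) from the observed outcomes.
    P1 = [sum(1 for y in y_obs if y <= t) / n_obs for t in grid]
    in_tube = all(p * p_d1 - tol <= f <= p * p_d1 + (1 - p_d1) + tol
                  for f, p in zip(Fg, P1))
    interval_ok = all(Fg[j] - Fg[i] >= (P1[j] - P1[i]) * p_d1 - tol
                      for i in range(len(grid)) for j in range(i + 1, len(grid)))
    return in_tube, interval_ok

# Hypothetical data: every observed outcome equals 1.5, and P(d=1) = 2/3.
y_obs, p_d1 = [1.5, 1.5], 2 / 3
grid = [0.0, 1.0, 1.5, 2.0, 3.0]

def F_bad(t):   # stays inside the tube but puts only mass 1/3 on (1, 2]
    return 0.0 if t < 1 else 1 / 3 if t < 1.5 else 2 / 3 if t < 3 else 1.0

def F_good(t):  # mass 2/3 at 1.5 (the observed part) and 1/3 at 3
    return 0.0 if t < 1.5 else 2 / 3 if t < 3 else 1.0

print(check_candidate_cdf(F_bad, grid, y_obs, p_d1))   # (True, False)
print(check_candidate_cdf(F_good, grid, y_obs, p_d1))  # (True, True)
```

As in the example, the pointwise tube alone cannot rule out `F_bad`. Only the restriction on intervals detects that it assigns too little probability to a set where the observed outcomes alone already carry mass <math>2/3</math>.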

How can one characterize the sharp identification region for the CDF of <math>\ey|\ex=x</math> under the assumptions in Identification [[#IP:bounds:mean:md |Problem]]?

In general, there is not a single answer to this question: different methodologies can be used.

Here I use results in <ref name="man03"></ref>{{rp|at=Corollary 1.3.1}} and <ref name="mol:mol18"></ref>{{rp|at=Theorem 2.25}}, which yield an alternative characterization of <math>\idr{\sQ(\ey|\ex=x)}</math> that translates directly into a characterization of <math>\idr{\sF(\ey|\ex=x)}</math>.<ref group="Notes" >Whereas {{ref|name=man94}} is very clear that the collection of CDFs in \eqref{eq:pointwise_bounds_F_md} is an outer region for the CDF of <math>\ey|\ex=x</math>, and {{ref|name=man03}} provides the sharp characterization in \eqref{eq:sharp_id_P_md_Manski}, {{ref|name=man07a}}{{rp|at=p. 39}} does not state all the requirements that characterize <math>\idr{\sF(\ey|\ex=x)}</math>.</ref>

{{proofcard|Theorem (Conditional Distribution and CDF of Selectively Observed Data)|SIR:CDF_md|

Given <math>\tau\in\cT</math>, let <math>\tau_K(x)</math> denote the probability that distribution <math>\tau</math> assigns to set <math>K</math> conditional on <math>\ex=x</math>, with <math>\tau_y(x)\equiv\tau_{\{y\}}(x)</math>.

Under the assumptions in Identification [[#IP:bounds:mean:md |Problem]],

| Line 367: | Line 367: | ||

\end{multline} | \end{multline} | ||

</math> | </math> | ||

| | |||

The characterization in \eqref{eq:sharp_id_P_md_Manski} follows from equation \eqref{eq:Tau_md}, observing that if <math>\tau(x)\in\idr{\sQ(\ey|\ex=x)}</math> as defined in equation \eqref{eq:Tau_md}, then there exists a distribution <math>\upsilon(x)\in\cT</math> such that <math>\tau(x) = \sP(\ey|\ex=x,\ed=1)\sP(\ed=1|\ex=x)+\upsilon(x)\sP(\ed=0|\ex=x)</math>.

Hence, by construction <math>\tau_K(x) \ge \sP(\ey\in K|\ex=x,\ed=1)\sP(\ed=1|\ex=x)</math>, <math>\forall K\subset \cY</math>. Conversely, if one has <math>\tau_K(x) \ge \sP(\ey\in K|\ex=x,\ed=1)\sP(\ed=1|\ex=x)</math>, <math>\forall K\subset \cY</math>, one can define <math>\upsilon(x)=\frac{\tau(x) - \sP(\ey|\ex=x,\ed=1)\sP(\ed=1|\ex=x)}{\sP(\ed=0|\ex=x)}</math>.

| Line 382: | Line 382: | ||

The result in equation \eqref{eq:sharp_id_P_md_interval} is proven in <ref name="mol:mol18"></ref>{{rp|at=Theorem 2.25}} using elements of random set theory, to which I return in Section [[#subsec:interval_data |Interval Data]].

Using elements of random set theory, it is also possible to show that the characterization in \eqref{eq:sharp_id_P_md_Manski} only requires checking the inequalities for <math>K</math> ranging over the compact subsets of <math>\cY</math>.

}}
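When <math>\cY</math> is finite, every subset of it is compact, and the characterization in \eqref{eq:sharp_id_P_md_Manski} can be checked by brute force. The sketch below does this over all nonempty subsets <math>K</math>, with covariates omitted and hypothetical function names and numbers. It then compares the result with a check over singletons only. Both sides of each inequality are additive in <math>K</math>, so on a finite support the two checks agree. The brute-force version, however, grows exponentially with the number of support points.

```python
from itertools import combinations

def in_region_all_subsets(tau, p_obs, p_d1, tol=1e-12):
    """Check tau_K >= P(y in K | d=1) P(d=1) for every nonempty K (finite support)."""
    if abs(sum(tau) - 1.0) > tol:
        return False
    n = len(tau)
    for r in range(1, n + 1):
        for K in combinations(range(n), r):
            if sum(tau[k] for k in K) < p_d1 * sum(p_obs[k] for k in K) - tol:
                return False
    return True

def in_region_singletons(tau, p_obs, p_d1, tol=1e-12):
    """Same check; by additivity, singletons K = {y} suffice on a finite support."""
    return abs(sum(tau) - 1.0) <= tol and all(
        t >= p_d1 * p - tol for t, p in zip(tau, p_obs))

# Hypothetical example: three support points, P(d=1) = 0.7.
p_obs, p_d1 = [0.5, 0.3, 0.2], 0.7
tau_in = [0.65, 0.21, 0.14]   # unobserved mass 0.3 placed on the first point
tau_out = [0.5, 0.1, 0.4]     # assigns less than 0.7 * 0.3 to the second point
```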

This section provides sharp identification regions and outer regions for a variety of functionals of interest.

The computational complexity of these characterizations varies widely.

| Line 398: | Line 398: | ||

Examples include the ''local average treatment effect'' of <ref name="imb:ang94"></ref> and the ''marginal treatment effect'' of <ref name="hec:vyt99"></ref><ref name="hec:vyt01"></ref><ref name="hec:vyt05"></ref>.

For thorough discussions of the literature on program evaluation, I refer to the textbook treatments in <ref name="man95"></ref><ref name="man03"></ref><ref name="man07a"></ref> and <ref name="imb:rub15"></ref>, to the Handbook chapters by <ref name="hec:vyt07I"></ref><ref name="hec:vyt07II"></ref> and <ref name="abb:hec07"></ref>, and to the review articles by <ref name="imb:woo09"></ref> and <ref name="mog:tor18"></ref>.

Using standard notation (e.g., <ref name="ney23"></ref>), let <math>\ey:\T \mapsto \cY</math> be an individual-specific response function, with <math>\T=\{0,1,\dots,T\}</math> a finite set of mutually exclusive and exhaustive treatments, and let <math>\es</math> denote the individual's received treatment (taking its realizations in <math>\T</math>).<ref group="Notes" >Here the treatment response is a function only of the (scalar) treatment received by the given individual, an assumption known as the ''stable unit treatment value assumption'' {{ref|name=rub78}}.</ref>

The researcher observes data <math>(\ey,\es,\ex)\sim\sP</math>, with <math>\ey\equiv\ey(\es)</math> the outcome corresponding to the received treatment <math>\es</math>, and <math>\ex</math> a vector of covariates.

The outcome <math>\ey(t)</math> for <math>\es\neq t</math> is counterfactual, and hence can be conceptualized as missing.

Therefore, we are in the framework of Identification [[#IP:bounds:mean:md |Problem]], and all the results from Section [[#subsec:missing_data |Selectively Observed Data]] apply in this context too, subject to adjustments in notation.<ref group="Notes" >{{ref|name=ber:mol:mol12}} and {{ref|name=mol:mol18}}{{rp|at=Section 2.5}} provide a characterization of the sharp identification region for the joint distribution of <math>[\ey(t),t\in\T]</math>.</ref>

For example, using [[#SIR:prob:E:md |Theorem]],

| Line 431: | Line 432: | ||

The resulting bounds have width equal to <math>(y_1-y_0)[2-\sP(\es=t_1|\ex=x)-\sP(\es=t_0|\ex=x)]\in[(y_1-y_0),2(y_1-y_0)]</math>, and hence are informative only if both <math>y_0 > -\infty</math> and <math>y_1 < \infty</math>.

As the largest logically possible value for the ATE (in the absence of information from data) cannot be larger than <math>(y_1-y_0)</math>, and the smallest cannot be smaller than <math>-(y_1-y_0)</math>, the sharp bounds on the ATE, whose width is at least <math>(y_1-y_0)</math>, always cover zero.
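Here is a minimal sketch of the worst case bounds on the ATE, with covariates omitted and hypothetical data and function names. Each mean <math>\E_\sQ(\ey(t))</math> is bounded by filling in the unobserved outcomes with <math>y_0</math> or <math>y_1</math>. The ATE bounds then subtract the opposite endpoints. The example reproduces the width formula and the fact that the bounds cover zero.

```python
def mean_bounds(y, s, t, y_lo, y_hi):
    """Worst case bounds on E[y(t)]: missing y(t) set to y_lo or y_hi (covariates omitted)."""
    y_t = [yi for yi, si in zip(y, s) if si == t]
    p = len(y_t) / len(s)                          # P(s = t)
    m = sum(y_t) / len(y_t) if y_t else 0.0        # E(y | s = t)
    return m * p + y_lo * (1 - p), m * p + y_hi * (1 - p)

def ate_bounds(y, s, t1, t0, y_lo, y_hi):
    """Worst case bounds on E[y(t1)] - E[y(t0)]."""
    lo1, hi1 = mean_bounds(y, s, t1, y_lo, y_hi)
    lo0, hi0 = mean_bounds(y, s, t0, y_lo, y_hi)
    return lo1 - hi0, hi1 - lo0

# Hypothetical data: three treatments {0, 1, 2}, outcomes in [0, 1].
y = [1.0, 0.0, 1.0, 1.0, 0.0, 0.0]
s = [1, 1, 0, 0, 2, 2]
lo, hi = ate_bounds(y, s, t1=1, t0=0, y_lo=0.0, y_hi=1.0)
width = hi - lo   # equals (1 - 0) * (2 - 1/3 - 1/3) = 4/3
```

Here the bounds are <math>[-5/6, 1/2]</math>. They are narrower than the logically possible range <math>[-1,1]</math>, yet silent on the sign of the ATE.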

'''Key Insight:''' <i>How should one think about the finding on the size of the worst case bounds on the ATE?

On the one hand, if both <math>y_0 > -\infty</math> and <math>y_1 < \infty</math>, the bounds are informative, because they are a strict subset of the ATE's possible realizations.

On the other hand, they reveal that the data alone are silent on the sign of the ATE.

This means that assumptions play a crucial role in delivering stronger conclusions about this policy-relevant parameter.

The partial identification approach to empirical research recommends that, as assumptions are added to the analysis, one systematically reports how each contributes to shrinking the bounds, making transparent their role in shaping inference.</i>

What assumptions may researchers bring to bear to learn more about treatment effects of interest?

The literature has provided a wide array of well-motivated and useful restrictions.

| Line 478: | Line 479: | ||

The second example of assumptions used to tighten worst case bounds is that of ''exclusion restrictions'', as in, e.g., <ref name="man90"></ref>.

Suppose the researcher observes a random variable <math>\ez</math>, taking its realizations in <math>\cZ</math>, such that<ref group="Notes" >Stronger exclusion restrictions include statistical independence of the response function at each <math>t</math> with <math>\ez</math>: <math>\sQ(\ey(t)|\ez,\ex)=\sQ(\ey(t)|\ex)~\forall t \in\T,~\ex</math>-a.s.; and statistical independence of the entire response function with <math>\ez</math>: <math>\sQ([\ey(t),t \in\T]|\ez,\ex)=\sQ([\ey(t),t \in\T]|\ex),~\ex</math>-a.s.

Examples of partial identification analysis under these conditions can be found in {{ref|name=bal:pea97}}, {{ref|name=man03}}, {{ref|name=kit09}}, {{ref|name=ber:mol:mol12}}, {{ref|name=mac:sha:vyt18}}, and many others.</ref>

<math display="block"> | <math display="block"> | ||

| Line 500: | Line 501: | ||

If the instrument affects the probability of being selected into treatment, or the average outcome for the subpopulation receiving treatment <math>t</math>, the bounds on <math>\E_\sQ(\ey(t)|\ex=x)</math> shrink. | If the instrument affects the probability of being selected into treatment, or the average outcome for the subpopulation receiving treatment <math>t</math>, the bounds on <math>\E_\sQ(\ey(t)|\ex=x)</math> shrink. | ||

If the bounds are empty, the mean independence assumption can be refuted (see [[guide:7b0105e1fc#sec:misspec |Section]] for a discussion of misspecification in partial identification). | If the bounds are empty, the mean independence assumption can be refuted (see [[guide:7b0105e1fc#sec:misspec |Section]] for a discussion of misspecification in partial identification). | ||
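The intersection of bounds across instrument values can be sketched in a few lines of Python. This is a minimal sketch, assuming the bounds take Manski's standard form under mean independence: for each value <math>z</math>, the worst-case bounds are <math>\E_\sP(\ey\one(\es=t)|\ez=z)+y_0\sP(\es\ne t|\ez=z)</math> and <math>\E_\sP(\ey\one(\es=t)|\ez=z)+y_1\sP(\es\ne t|\ez=z)</math>, and the sharp bounds take the largest lower bound and the smallest upper bound over <math>z</math>. The function name <code>iv_mean_bounds</code>, the toy data, and the restriction to a single covariate cell <math>\ex=x</math> are illustrative assumptions.

```python
from collections import defaultdict


def iv_mean_bounds(y, s, z, t, y0, y1):
    """Hypothetical helper: bounds on E[y(t)] when y(t) is mean independent of z.

    y, s, z: observed outcomes, received treatments, instrument values
    (all within one covariate cell x). [y0, y1] is the outcome range.
    Returns (lower, upper); lower > upper signals that the assumption is refuted.
    """
    cells = defaultdict(list)
    for yi, si, zi in zip(y, s, z):
        cells[zi].append((yi, si))

    lowers, uppers = [], []
    for obs in cells.values():
        n = len(obs)
        p_t = sum(1 for _, si in obs if si == t) / n    # P(s = t | z)
        m_t = sum(yi for yi, si in obs if si == t) / n  # E[y 1(s = t) | z]
        lowers.append(m_t + y0 * (1 - p_t))  # unobserved y(t) set to y0
        uppers.append(m_t + y1 * (1 - p_t))  # unobserved y(t) set to y1
    # Intersect the worst-case bounds across instrument values.
    return max(lowers), min(uppers)


y = [1, 0, 1, 1, 0, 0]
s = [1, 0, 1, 1, 0, 1]
z = [0, 0, 1, 1, 1, 1]
print(iv_mean_bounds(y, s, z, t=1, y0=0, y1=1))  # (0.5, 0.75)
```

Here the instrument value <math>z=1</math> tightens the upper bound from 1 to 0.75, while both values give the same lower bound.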

<ref name="man:pep00"></ref><ref name="man:pep09"></ref> generalize the notion of an instrumental variable to that of a ''monotone'' instrumental variable, and show how these can be used to obtain tighter bounds on treatment effect parameters.<ref group="Notes" >See {{ref|name=che:ros19}}{{rp|at=Chapter XXX in this Volume}} for further discussion.</ref>

They also show how shape restrictions and exclusion restrictions can jointly further tighten the bounds.

<ref name="man13social"></ref> generalizes these findings to the case where treatment response may exhibit social interactions, that is, where each individual's outcome depends on the treatment received by all other individuals.

Other instances abound.

Here I focus first on the case of interval outcome data.

{{proofcard|Identification Problem (Interval Outcome Data)|IP:interval_outcome|Assume that, in addition to being compact, <math>\cY</math> is either countable or <math>\cY=[y_0,y_1]</math>, with <math>y_0=\min_{y\in\cY}y</math> and <math>y_1=\max_{y\in\cY}y</math>.

Let <math>(\yL,\yU,\ex)\sim\sP</math> be observable random variables and <math>\ey</math> be an unobservable random variable whose distribution (or features thereof) is of interest, with <math>\yL,\yU,\ey\in\cY</math>.

Suppose that <math>(\yL,\yU,\ey)</math> are such that <math>\sR(\yL\le\ey\le\yU)=1</math>.<ref group="Notes" ><span id="fn:missing_special_case_interval"/>In Identification [[#IP:bounds:mean:md |Problem]] the observable variables are <math>(\ey\ed,\ed,\ex)</math>, and <math>(\yL,\yU)</math> are determined as follows: <math>\yL=\ey\ed+y_0(1-\ed)</math>, <math>\yU=\ey\ed+y_1(1-\ed)</math>. For the analysis in Section [[#subsec:programme:eval |Treatment Effects with and without Instrumental Variables]], the data is <math>(\ey,\es,\ex)</math> and <math>\yL=\ey\one(\es=t)+y_0\one(\es\ne t)</math>, <math>\yU=\ey\one(\es=t)+y_1\one(\es\ne t)</math>.

Hence, <math>\sP(\yL\le\ey\le\yU)=1</math> by construction.</ref>

In the absence of additional information, what can the researcher learn about features of <math>\sQ(\ey|\ex=x)</math>, the conditional distribution of <math>\ey</math> given <math>\ex=x</math>?

|}}

It is immediate to obtain the sharp identification region

\end{align*}

</math>

Then <math>\eY</math> is a random closed set according to [[guide:379e0dcd67#def:rcs |Definition]].<ref group="Notes" >For a proof of this statement, see {{ref|name=mol:mol18}}{{rp|at=Example 1.11}}.</ref> The requirement <math>\sR(\yL\le\ey\le\yU)=1</math> can be equivalently expressed as

<math display="block">

In order to harness such information to characterize the set of observationally equivalent probability distributions for <math>\ey</math>, one can leverage a result due to <ref name="art83"></ref> (and <ref name="nor92"></ref>), reported in [[guide:379e0dcd67#thr:artstein |Theorem]] in [[guide:379e0dcd67#app:RCS |Appendix]], which allows one to translate \eqref{eq:y_in_Y} into a collection of conditional moment inequalities.

Specifically, let <math>\cT</math> denote the space of all probability measures with support in <math>\cY</math>.

{{proofcard|Theorem (Conditional Distribution of Interval-Observed Outcome Data)|SIR:CDF_id|

Given <math>\tau\in\cT</math>, let <math>\tau_K(x)</math> denote the probability that distribution <math>\tau</math> assigns to set <math>K</math> conditional on <math>\ex=x</math>.

Under the assumptions in Identification [[#IP:interval_outcome |Problem]], the sharp identification region for <math>\sQ(\ey|\ex=x)</math> is

\end{align}

</math>

|

[[guide:379e0dcd67#thr:artstein |Theorem]] yields \eqref{eq:sharp_id_P_interval_1}.

If <math>\cY=[y_0,y_1]</math>, <ref name="mol:mol18"></ref>{{rp|at=Theorem 2.25}} show that it suffices to verify the inequalities in \eqref{eq:sharp_id_P_interval_2} for sets <math>K</math> that are intervals.

}}
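For a small finite outcome space, membership of a candidate distribution in the sharp identification region can be checked by brute force. This is a minimal sketch, assuming a finite <math>\cY</math>, a single covariate cell <math>\ex=x</math>, and the capacity-functional form of the dominance condition in Artstein's theorem, <math>\tau(K)\le\sP(\eY\cap K\neq\emptyset)</math> for every nonempty <math>K\subseteq\cY</math>, with probabilities replaced by sample frequencies. The function names and toy data are hypothetical. Enumerating all subsets requires <math>2^{|\cY|}</math> checks, so this approach is only practical for small outcome spaces.

```python
from itertools import combinations


def capacity(intervals, K):
    """Empirical capacity functional: share of observations whose set
    Y_i = [yL_i, yU_i] contains at least one point of K."""
    hits = sum(1 for lo, hi in intervals if any(lo <= y <= hi for y in K))
    return hits / len(intervals)


def in_sharp_region(tau, intervals, support, tol=1e-12):
    """Check tau(K) <= P(Y intersects K) for every nonempty K within support.

    tau: dict mapping outcome values to probabilities (hypothetical format).
    """
    for r in range(1, len(support) + 1):
        for K in combinations(support, r):
            tau_K = sum(tau.get(y, 0.0) for y in K)
            if tau_K > capacity(intervals, K) + tol:
                return False
    return True


data = [(0, 1), (1, 2), (2, 2), (0, 3)]  # observed (yL, yU) pairs
support = [0, 1, 2, 3]
print(in_sharp_region({0: 0.5, 1: 0.25, 2: 0.25}, data, support))  # law of yL: True
print(in_sharp_region({3: 1.0}, data, support))  # point mass at 3: False
```

The law of <math>\yL</math> always passes, because <math>\yL</math> is itself a selection of <math>\eY</math>; the point mass at 3 fails at <math>K=\{3\}</math>, which only one of the four intervals reaches.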

Compare equation \eqref{eq:sharp_id_P_interval_1} with equation \eqref{eq:sharp_id_P_md_Manski}.

Under the set-up of Identification [[#IP:bounds:mean:md |Problem]], when <math>\ed=1</math> we have <math>\eY=\{\ey\}</math> and when <math>\ed=0</math> we have <math>\eY=\cY</math>.

It follows that the characterizations in \eqref{eq:sharp_id_P_interval_1} and \eqref{eq:sharp_id_P_md_Manski} are equivalent.

If <math>\cY</math> is countable, it is easy to show that \eqref{eq:sharp_id_P_interval_1} simplifies to \eqref{eq:sharp_id_P_md_Manski} (see, e.g., <ref name="ber:mol:mol12"></ref>{{rp|at=Proposition 2.2}}).

'''Key Insight (Random set theory and partial identification):'''<span id="big_idea:pi_and_rs"/><i>The mathematical framework for the analysis of random closed sets embodied in random set theory is naturally suited to conducting identification analysis and statistical inference in partially identified models.

This is because, as argued by <ref name="ber:mol08"></ref> and <ref name="ber:mol:mol11"></ref><ref name="ber:mol:mol12"></ref>, lack of point identification can often be traced back to a collection of random variables that are consistent with the available data and maintained assumptions.

In turn, this collection of random variables is equal to the family of selections of a properly specified random closed set, so that random set theory applies.

More examples are given throughout this chapter.

As mentioned in the Introduction, the exercise of defining the random closed set that is relevant for the problem under consideration is routinely carried out in partial identification analysis, even when random set theory is not applied.

For example, in the case of treatment effect analysis with monotone response functions, <ref name="man97:monotone"></ref> derived the set on the right-hand side of \eqref{eq:RCS:MTR}, which satisfies [[guide:379e0dcd67#def:rcs |Definition]].</i>

An attractive feature of the characterization in \eqref{eq:sharp_id_P_interval_1} is that it holds regardless of the specific assumptions on <math>\yL,\,\yU</math>, and <math>\cY</math>.

Later sections in this chapter illustrate how [[guide:379e0dcd67#thr:artstein |Theorem]] delivers the sharp identification region in other, more complex instances of partial identification of probability distributions, as well as in structural models.

This is because each <math>\ey_\eu</math> is a (stochastic) convex combination of <math>\yL,\yU</math>, hence each of these random variables satisfies <math>\sR(\yL\le\ey_\eu\le\yU)=1</math>.

While this characterization is sharp, it can be difficult to implement in practice, because it requires working with all possible random variables <math>\ey_\eu</math> built using all possible random variables <math>\eu</math> with support in <math>[0,1]</math>.

[[guide:379e0dcd67#thr:artstein |Theorem]] allows one to bypass the use of <math>\eu</math> and directly obtain a characterization of the sharp identification region for <math>\sQ(\ey|\ex=x)</math> based on conditional moment inequalities.<ref group="Notes" >It can be shown that the collection of random variables <math>\ey_\eu</math> equals the collection of ''measurable selections'' of the random closed set <math>\eY\equiv [\yL,\yU]</math> (see [[guide:379e0dcd67#def:selection |Definition]]); see {{ref|name=ber:mol:mol11}}{{rp|at=Lemma 2.1}}.

[[guide:379e0dcd67#thr:artstein |Theorem]] provides a characterization of the distribution of any <math>\ey_\eu</math> that satisfies <math>\ey_\eu \in \eY</math> a.s., based on a dominance condition that relates the distribution of <math>\ey_\eu</math> to the distribution of the random set <math>\eY</math>.

This dominance condition is given by the inequalities in \eqref{eq:sharp_id_P_interval_1}.

For the case of interval covariate data, <ref name="man:tam02"></ref> provide a set of sufficient conditions under which simple and elegant sharp bounds on functionals of <math>\sQ(\ey|\ex)</math> can be obtained, even in this substantially harder identification problem.

Their assumptions are listed in Identification [[#IP:interval_covariate |Problem]], and their result (with proof) in [[#SIR:man:tam:nonpar |Theorem]].

{{proofcard|Identification Problem (Interval Covariate Data)|IP:interval_covariate|

Let <math>(\ey,\xL,\xU)\sim\sP</math> be observable random variables in <math>\R\times\R\times\R</math> and <math>\ex\in\R</math> be an unobservable random variable.

Suppose that <math>\sR</math>, the joint distribution of <math>(\ey,\ex,\xL,\xU)</math>, is such that: (I) <math>\sR(\xL\le\ex\le\xU)=1</math>; (M) <math>\E_\sQ(\ey|\ex=x)</math> is weakly increasing in <math>x</math>; and (MI) <math>\E_{\sR}(\ey|\ex,\xL,\xU)=\E_\sQ(\ey|\ex)</math>.

In the absence of additional information, what can the researcher learn about <math>\E_\sQ(\ey|\ex=x)</math> for given <math>x\in\cX</math>?

|}}

Compared to the earlier discussion for the interval outcome case, here there are two additional assumptions.

The monotonicity condition (M) is a simple shape restriction, which nonetheless requires some prior knowledge about the joint distribution of <math>(\ey,\ex)</math>.

The mean independence restriction (MI) requires that if <math>\ex</math> were observed, knowledge of <math>(\xL,\xU)</math> would not affect the conditional expectation of <math>\ey</math> given <math>\ex</math>.

The assumption is not innocuous, as pointed out by the authors.

For example, it may fail if censoring is endogenous.<ref group="Notes" ><span id="foot:auc:bug:hot17"/>For the case of missing covariate data, which is a special case of interval covariate data by arguments similar to those in [[#fn:missing_special_case_interval |footnote]], {{ref|name=auc:bug:hot17}} show that the MI restriction implies the assumption that data is missing at random.</ref>

{{proofcard|Theorem (Conditional Expectation with Interval-Observed Covariate Data)|SIR:man:tam:nonpar|

Under the assumptions of Identification [[#IP:interval_covariate |Problem]], the sharp identification region for <math>\E_\sQ(\ey|\ex=x)</math> for given <math>x\in\cX</math> is

\end{align}

</math>

|

The law of iterated expectations and the mean independence assumption (MI) yield <math>\E_\sP(\ey|\xL,\xU)=\int \E_\sQ(\ey|\ex)d\sR(\ex|\xL,\xU)</math>.

For all <math>\underline{x}\le \bar{x}</math>, the monotonicity assumption and the fact that <math>\ex\in[\xL,\xU]</math>-a.s. yield <math>\E_\sQ(\ey|\ex=\underline{x})\le \int \E_\sQ(\ey|\ex)d\sR(\ex|\xL=\underline{x},\xU=\bar{x}) \le \E_\sQ(\ey|\ex=\bar{x})</math>.

The bound is weakly increasing as a function of <math>x</math>, so that the monotonicity assumption on <math>\E_\sQ(\ey|\ex=x)</math> holds and the bound is sharp.

The argument for the upper bound can be concluded similarly.

}}
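When the interval endpoints take finitely many values, the bounds can be computed directly from cell means. This is a minimal sketch, assuming the bounds have the form implied by the proof above: the lower bound is the largest <math>\E_\sP(\ey|\xL,\xU)</math> over cells with <math>\xU\le x</math>, and the upper bound is the smallest over cells with <math>\xL\ge x</math>. The function name and toy data are hypothetical. An empty set of candidate cells yields an infinite bound, since this sketch assumes no bounded support for <math>\ey</math>.

```python
from collections import defaultdict


def manski_tamer_bounds(y, x_lo, x_hi, x):
    """Hypothetical helper: bounds on E[y | x] under (I), (M), and (MI)
    when the observed covariate intervals [x_lo, x_hi] form discrete cells."""
    sums, counts = defaultdict(float), defaultdict(int)
    for yi, lo, hi in zip(y, x_lo, x_hi):
        sums[(lo, hi)] += yi
        counts[(lo, hi)] += 1
    cell_means = {cell: sums[cell] / counts[cell] for cell in sums}

    # Cells lying entirely to the left of x bound E[y | x] from below ...
    lower_cands = [m for (lo, hi), m in cell_means.items() if hi <= x]
    # ... and cells lying entirely to the right of x bound it from above.
    upper_cands = [m for (lo, hi), m in cell_means.items() if lo >= x]
    lower = max(lower_cands) if lower_cands else float("-inf")
    upper = min(upper_cands) if upper_cands else float("inf")
    return lower, upper


y = [1, 3, 4, 6, 7, 9]
x_lo = [0, 0, 1, 1, 2, 2]
x_hi = [1, 1, 2, 2, 3, 3]
print(manski_tamer_bounds(y, x_lo, x_hi, 1.5))  # (2.0, 8.0)
```

Cells that contain <math>x</math> in their interior, such as <math>[1,2]</math> for <math>x=1.5</math>, are uninformative for either bound under these assumptions.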

Learning about functionals of <math>\sQ(\ey|\ex=x)</math> naturally implies learning about predictors of <math>\ey|\ex=x</math>.

For example, <math>\idr{\E_\sQ(\ey|\ex=x)}</math> yields the collection of values for the best predictor under square loss;

<ref name="man03"></ref>{{rp|at=pp. 56-58}} discusses what can be learned about the best linear predictor of <math>\ey</math> conditional on <math>\ex</math>, when only interval data on <math>(\ey,\ex)</math> is available.

I treat first the case of interval outcome and perfectly observed covariates.

{{proofcard|Identification Problem (Parametric Prediction with Interval Outcome Data)|IP:param_pred_interval|Maintain the same assumptions as in Identification [[#IP:interval_outcome |Problem]].

Let <math>(\yL,\yU,\ex)\sim\sP</math> be observable random variables and <math>\ey</math> be an unobservable random variable, with <math>\sR(\yL\le\ey\le\yU)=1</math>.

In the absence of additional information, what can the researcher learn about the best linear predictor of <math>\ey</math> given <math>\ex=x</math>?

|}}

For simplicity, suppose that <math>\ex</math> is a scalar, and let <math>\theta=[\theta_0~\theta_1]^\top\in\Theta\subset\R^2</math> denote the parameter vector of the best linear predictor of <math>\ey|\ex</math>.

Assume that <math>Var(\ex) > 0</math>.

</math>

where <math>\Sel(\eY)</math> is the set of all measurable selections from <math>\eY</math>, see [[guide:379e0dcd67#def:selection |Definition]]. Then,

{{proofcard|Theorem (Best Linear Predictor with Interval Outcome Data)|SIR:BLP_intervalY|

Under the assumptions of Identification [[#IP:param_pred_interval |Problem]], the sharp identification region for the parameters of the best linear predictor of <math>\ey|\ex</math> is

</math>

with <math>\E_\sP\eG</math> the Aumann (or selection) expectation of <math>\eG</math> as in [[guide:379e0dcd67#def:sel-exp |Definition]].

|

By [[guide:379e0dcd67#thr:artstein |Theorem]], <math>(\tilde\ey,\tilde\ex)\in(\eY\times\ex)</math> (up to an ordered coupling as discussed in [[guide:379e0dcd67#app:RCS |Appendix]]), if and only if the distribution of <math>(\tilde\ey,\tilde\ex)</math> belongs to <math>\idr{\sQ(\ey,\ex)}</math>.

The result follows.

}}

In either representation \eqref{eq:manski_blp} or \eqref{eq:ThetaI_BLP}, <math>\idr{\theta}</math> is the collection of best linear predictors for each selection of <math>\eY</math>.<ref group="Notes" >Under our assumption that <math>\cY</math> is a bounded interval, all the selections of <math>\eY</math> are integrable. {{ref|name=ber:mol08}} consider the more general case where <math>\cY</math> is not required to be bounded.</ref>

Why should one bother with the representation in \eqref{eq:ThetaI_BLP}?

The reason is that <math>\idr{\theta}</math> is a convex set, as can be seen from representation \eqref{eq:ThetaI_BLP}: <math>\eG</math> has almost surely convex realizations that are segments, and the Aumann expectation of a random convex set is convex.<ref group="Notes" >Here <math>\idr{\theta}\subset\R^2</math> in our example, and <math>\idr{\theta}\subset\R^d</math> if <math>\ex</math> is a <math>(d-1)</math>-dimensional vector and the predictor includes an intercept.</ref>

\end{align}

</math>

where <math>f(\ex,u)\equiv [1~\ex]\Sigma_\sP^{-1}u</math>.<ref group="Notes" >See {{ref|name=ber:mol08}}{{rp|at=p. 808}} and {{ref|name=bon:mag:mau12}}{{rp|at=p. 1136}}.</ref>

The characterization in \eqref{eq:supfun:BLP} results from [[guide:379e0dcd67#thr:exp-supp |Theorem]], which yields <math>h_{\idr{\theta}}(u)=h_{\Sigma_\sP^{-1} \E_\sP\eG}(u)=\E_\sP h_{\Sigma_\sP^{-1} \eG}(u)</math>, and the fact that <math>\E_\sP h_{\Sigma_\sP^{-1} \eG}(u)</math> equals the expression in \eqref{eq:supfun:BLP}.

As I discuss in [[guide:6d1a428897#sec:inference |Section]] below, because the support function fully characterizes the boundary of <math>\idr{\theta}</math>, \eqref{eq:supfun:BLP} allows for a simple sample analog estimator, and for inference procedures with desirable properties.

It also immediately yields sharp bounds on linear combinations of <math>\theta</math> by judicious choice of <math>u</math>.<ref group="Notes" >For example, in the case that <math>\ex</math> is a scalar, sharp bounds on <math>\theta_1</math> can be obtained by choosing <math>u=[0~1]^\top</math> and <math>u=[0~-1]^\top</math>, which yield <math>\theta_1\in[\theta_{1L},\theta_{1U}]</math> with <math>\theta_{1L}=\min_{\ey\in[\yL,\yU]}\frac{Cov(\ex,\ey)}{Var(\ex)}=\frac{\E_\sP[(\ex-\E_\sP\ex)(\yL\one(\ex > \E_\sP\ex)+\yU\one(\ex\le\E_\sP\ex))]}{\E_\sP\ex^2-(\E_\sP\ex)^2}</math> and <math>\theta_{1U}=\max_{\ey\in[\yL,\yU]}\frac{Cov(\ex,\ey)}{Var(\ex)}=\frac{\E_\sP[(\ex-\E_\sP\ex)(\yL\one(\ex < \E_\sP\ex)+\yU\one(\ex\ge\E_\sP\ex))]}{\E_\sP\ex^2-(\E_\sP\ex)^2}</math>.</ref>
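The sample analog estimator of the support function is short to code. This is a minimal sketch with NumPy, assuming that \eqref{eq:supfun:BLP} has the standard form <math>h_{\idr{\theta}}(u)=\E_\sP[(\yL\one(f(\ex,u)<0)+\yU\one(f(\ex,u)\ge 0))f(\ex,u)]</math>, which equals <math>\E_\sP[\max(\yL f(\ex,u),\yU f(\ex,u))]</math> because <math>\yL\le\yU</math>, and that <math>\Sigma_\sP=\E_\sP([1~\ex]^\top[1~\ex])</math>; all expectations are replaced by sample averages. The function names are hypothetical. The second function codes the closed form for <math>\theta_1</math> from the footnote, so the two can be cross-checked at <math>u=[0~1]^\top</math> and <math>u=[0~-1]^\top</math>.

```python
import numpy as np


def blp_support_function(y_lo, y_hi, x, u):
    """Sample analog of h(u) = E[max(yL * f, yU * f)], f = [1 x] Sigma^{-1} u."""
    y_lo, y_hi, x = (np.asarray(a, dtype=float) for a in (y_lo, y_hi, x))
    Z = np.column_stack([np.ones_like(x), x])  # regressors [1 x]
    sigma = Z.T @ Z / len(x)                   # sample E[[1 x]^T [1 x]]
    f = Z @ np.linalg.solve(sigma, np.asarray(u, dtype=float))
    # For each observation, pick the endpoint of [yL, yU] maximizing y * f.
    return float(np.mean(np.maximum(y_lo * f, y_hi * f)))


def slope_bounds(y_lo, y_hi, x):
    """Closed-form sharp bounds on theta_1 (scalar x), sample analog."""
    y_lo, y_hi, x = (np.asarray(a, dtype=float) for a in (y_lo, y_hi, x))
    dx = x - x.mean()
    var = np.mean(x ** 2) - x.mean() ** 2
    lower = np.mean(dx * np.where(dx > 0, y_lo, y_hi)) / var
    upper = np.mean(dx * np.where(dx >= 0, y_hi, y_lo)) / var
    return float(lower), float(upper)


x = np.array([0.0, 1.0, 2.0, 3.0])
y = 1.0 + 2.0 * x                     # hypothetical latent outcomes
y_lo, y_hi = y - 1.0, y + 1.0         # observed brackets around y
print(slope_bounds(y_lo, y_hi, x))    # approximately (1.2, 2.8)
# Support function in directions -e_2 and e_2 gives the same two numbers.
print(-blp_support_function(y_lo, y_hi, x, [0, -1]),
      blp_support_function(y_lo, y_hi, x, [0, 1]))
```

When <math>\yL=\yU</math>, the support function in direction <math>u</math> reduces to <math>u^\top\theta</math> with <math>\theta</math> the least squares coefficients, which is a convenient sanity check.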

<ref name="sto07"></ref> and <ref name="mag:mau08"></ref> provide the same characterization as in \eqref{eq:supfun:BLP} using, respectively, direct optimization and the Frisch-Waugh-Lovell theorem.

A natural generalization of Identification [[#IP:param_pred_interval |Problem]] allows for both outcome and covariate data to be interval valued.

{{proofcard|Identification Problem (Parametric Prediction with Interval Outcome and Covariate Data)|IP:param_pred_interval_out_cov|

Maintain the same assumptions as in Identification [[#IP:param_pred_interval |Problem]], but with <math>\ex\in\cX\subset\R</math> unobservable.

Let the researcher observe <math>(\yL,\yU,\xL,\xU)</math> such that <math>\sR(\yL \leq \ey \leq \yU , \xL \leq \ex \leq \xU)=1</math>.

Let <math>\eX\equiv [\xL,\xU]</math> and let <math>\cX</math> be bounded.

In the absence of additional information, what can the researcher learn about the best linear predictor of <math>\ey</math> given <math>\ex=x</math>?

|}}

Abstractly, <math>\idr{\theta}</math> is as given in \eqref{eq:manski_blp}, with


How can this basic observation help in the case of interval data?

The idea is to apply the same insight to the set-valued data and obtain <math>\idr{\theta}</math> as the collection of <math>\theta</math>'s for which there exists a selection <math>(\tilde{\ey},\tilde{\ex}) \in \Sel(\eY \times \eX)</math>, with associated prediction error <math>\eps_\theta=\tilde{\ey}-\theta_0-\theta_1 \tilde{\ex}</math>, satisfying <math>\E_\sP \eps_\theta=0</math> and <math>\E_\sP (\eps_\theta \tilde{\ex})=0</math> (as shown by <ref name="ber:mol:mol11"></ref>).<ref group="Notes" >Here, for simplicity, I suppose that both <math>\xL</math> and <math>\xU</math> have bounded support.

{{ref|name=ber:mol:mol11}} do not make this simplifying assumption.</ref>
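The selection characterization suggests a simple way to generate points of the sample analogue of <math>\idr{\theta}</math>: for any selection, the OLS coefficients solve the sample versions of <math>\E_\sP \eps_\theta=0</math> and <math>\E_\sP (\eps_\theta \tilde{\ex})=0</math>. The sketch below (helper names are hypothetical) draws random selections uniformly inside each observed rectangle <math>[\yL,\yU]\times[\xL,\xU]</math>. It yields only an inner approximation of the region and is not the support-function characterization used in the text.

```python
import numpy as np

def ols_on_selection(y_sel, x_sel):
    """(theta_0, theta_1) solving the sample analogues of
    E[eps] = 0 and E[eps * x] = 0 for one selection (y_sel, x_sel)."""
    X = np.column_stack([np.ones_like(x_sel), x_sel])
    theta, *_ = np.linalg.lstsq(X, y_sel, rcond=None)
    return theta

def sample_identified_points(y_lo, y_hi, x_lo, x_hi, n_draws=1000, seed=0):
    """Inner approximation: OLS coefficients for randomly drawn selections."""
    rng = np.random.default_rng(seed)
    points = []
    for _ in range(n_draws):
        # One point inside each observation's rectangle is a selection.
        y_sel = rng.uniform(y_lo, y_hi)
        x_sel = rng.uniform(x_lo, x_hi)
        points.append(ols_on_selection(y_sel, x_sel))
    return np.array(points)
```

Plotting the returned points gives a rough picture of the region's shape, but uniform draws tend to cluster in its interior and rarely reach the boundary.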

To obtain the formal result, define the <math>\theta</math>-dependent set<ref group="Notes" >Note that while <math>\eG</math> is a convex set, <math>\Eps_\theta</math> is not.</ref>

<math display="block">
\Eps_\theta=\left\lbrace \begin{pmatrix}
\tilde{\ey}-\theta_0-\theta_1 \tilde{\ex}\\
(\tilde{\ey}-\theta_0-\theta_1 \tilde{\ex})\tilde{\ex}
\end{pmatrix} \: : \, (\tilde{\ey},\tilde{\ex}) \in \Sel(\eY \times\eX) \right\rbrace. </math>

{{proofcard|Theorem (Best Linear Predictor with Interval Outcome and Covariate Data)|SIR:blp_intervalYX|Under the assumptions of Identification [[#IP:param_pred_interval_out_cov |Problem]], the sharp identification region for the parameters of the best linear predictor of <math>\ey|\ex</math> is

<math display="block">


\end{align}

</math>

where <math>h_{\Eps_\theta}(u) = \max_{y\in\eY,x\in\eX} [u_1(y-\theta_0-\theta_1 x)+ u_2(yx-\theta_0 x-\theta_1 x^2)]</math> is the support function of the set <math>\Eps_\theta</math> in direction <math>u\in\Sphere</math>, see [[guide:379e0dcd67#def:sup-fun |Definition]].|By [[guide:379e0dcd67#thr:artstein |Theorem]], <math>(\tilde\ey,\tilde\ex)\in\Sel(\eY\times\eX)</math> (up to an ordered coupling as discussed in [[guide:379e0dcd67#app:RCS |Appendix]]) if and only if the distribution of <math>(\tilde\ey,\tilde\ex)</math> belongs to <math>\idr{\sQ(\ey,\ex)}</math>.

For a given <math>\theta</math>, one can find <math>(\tilde\ey,\tilde\ex)\in\Sel(\eY\times\eX)</math> such that <math>\E_\sP \eps_\theta=0</math> and <math>\E_\sP (\eps_\theta \tilde{\ex})=0</math> if and only if the zero vector belongs to <math>\E_\sP \Eps_\theta</math>, because <math>(\eps_\theta,\eps_\theta\tilde\ex)^\top</math> ranges over the selections of <math>\Eps_\theta</math> as <math>(\tilde\ey,\tilde\ex)</math> ranges over <math>\Sel(\eY\times\eX)</math>.

By [[guide:379e0dcd67#thr:exp-supp |Theorem]], <math>\E_\sP \Eps_\theta</math> is a convex set, and by [[guide:379e0dcd67#eq:dom_Aumann |eq:dom_Aumann]], <math>\mathbf{0} \in \E_\sP \Eps_\theta</math> if and only if <math>0 \leq h_{\E_\sP \Eps_\theta}(u) \,\forall \, u \in \Ball</math>.

The final characterization follows from [[guide:379e0dcd67#eq:supf |eq:supf]], i.e., <math>h_{\E_\sP \Eps_\theta}(u)=\E_\sP h_{\Eps_\theta}(u)</math>.}}

The support function <math>h_{\Eps_\theta}(u)</math> is easy to calculate and is a sublinear, hence convex, function of <math>u</math>, regardless of whether the variables involved are continuous or discrete.

For each candidate <math>\theta</math>, the optimization problem in ([[#eq:ThetaI:BLP |eq:ThetaI:BLP]]) that determines whether <math>\theta \in \idr{\theta}</math> is a convex program, hence easy to solve.
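To make this concrete, the following Python sketch evaluates <math>h_{\Eps_\theta}(u)</math> observation by observation and checks the sample analogue of <math>0\le\E_\sP h_{\Eps_\theta}(u)</math> from the proof above. It uses two facts: the objective is linear in <math>y</math>, so only <math>y\in\{\yL,\yU\}</math> matters; and for fixed <math>y</math> it is a quadratic in <math>x</math>, maximized on <math>[\xL,\xU]</math> at an endpoint or at the vertex. As a simplifying assumption, the convex program is replaced by a grid of directions on the unit circle (enough because <math>h</math> is positively homogeneous); a grid only approximates the minimum, so points very close to the boundary may be misclassified. The function names are hypothetical.

```python
import numpy as np

def support_fn(u, theta, y_lo, y_hi, x_lo, x_hi):
    """Observation-wise support function of Eps_theta in direction u:
    max over y in [y_lo, y_hi], x in [x_lo, x_hi] of
    u1*(y - t0 - t1*x) + u2*(y*x - t0*x - t1*x**2)."""
    u1, u2 = u
    t0, t1 = theta
    x_lo = np.asarray(x_lo, dtype=float)
    x_hi = np.asarray(x_hi, dtype=float)
    best = np.full(x_lo.shape, -np.inf)
    # Linear in y for fixed x, so only the endpoints of [y_lo, y_hi] matter.
    for y in (np.asarray(y_lo, dtype=float), np.asarray(y_hi, dtype=float)):
        # For fixed y the objective is a + b*x + c*x**2.
        a = u1 * (y - t0)
        b = u2 * y - u1 * t1 - u2 * t0
        c = -u2 * t1
        candidates = [x_lo, x_hi]
        if c < 0:
            # Concave quadratic: its vertex, clipped to the interval, is a candidate.
            candidates.append(np.clip(-b / (2 * c), x_lo, x_hi))
        for x in candidates:
            best = np.maximum(best, a + b * x + c * x**2)
    return best

def in_identified_set(theta, y_lo, y_hi, x_lo, x_hi, n_dirs=720, tol=1e-10):
    """Approximate check of min_u E_n[h(u)] >= 0 over a grid of unit directions."""
    angles = np.linspace(0.0, 2.0 * np.pi, n_dirs, endpoint=False)
    worst = min(
        support_fn((np.cos(a), np.sin(a)), theta, y_lo, y_hi, x_lo, x_hi).mean()
        for a in angles
    )
    return worst >= -tol
```

Scanning <code>in_identified_set</code> over a grid of <math>(\theta_0,\theta_1)</math> values traces out an approximation of the sample analogue of <math>\idr{\theta}</math>; a dedicated convex solver over <math>u</math> would replace the direction grid in a more careful implementation.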


<ref name="man:tam02"></ref> study identification of parametric regression models under the assumptions in Identification [[#IP:man:tam02_param |Problem]]; Theorem [[#SIR:man:tam02_param |SIR-]] below reports the result.

The proof is omitted because it follows immediately from the proof of Theorem [[#SIR:man:tam:nonpar |SIR-]].

{{proofcard|Identification Problem (Parametric Regression with Interval Covariate Data)|IP:man:tam02_param|Let <math>(\ey,\xL,\xU,\ew)\sim\sP</math> be observable random variables in <math>\R\times\R\times\R\times\R^d</math>, <math>d < \infty</math>, and let <math>\ex\in\R</math> be an unobservable random variable.

Assume that the joint distribution <math>\sR</math> of <math>(\ey,\ew,\ex,\xL,\xU)</math> is such that <math>\sR(\xL\le\ex\le\xU)=1</math> and <math>\E_{\sR}(\ey|\ew,\ex,\xL,\xU)=\E_\sQ(\ey|\ew,\ex)</math>.

Suppose that <math>\E_\sQ(\ey|\ew,\ex)=f(\ew,\ex;\theta)</math>, with <math>f:\R^d\times\R\times\Theta \mapsto \R</math> a known function such that for each <math>w\in\R^d</math> and <math>\theta\in\Theta</math>, <math>f(w,x;\theta)</math> is weakly increasing in <math>x</math>. In the absence of additional information, what can the researcher learn about <math>\theta</math>?|}}

{{proofcard|Theorem (Parametric Regression with Interval Covariate Data)|SIR:man:tam02_param|Under the assumptions of Identification [[#IP:man:tam02_param |Problem]], the sharp identification region for <math>\theta</math> is

<math display="block">
\begin{multline}
\idr{\theta}=\big\{\vartheta\in \Theta: f(\ew,\xL;\vartheta)\le \E_\sP(\ey|\ew,\xL,\xU) \le f(\ew,\xU;\vartheta),~(\ew,\xL,\xU)\text{-a.s.} \big\}.\label{eq:ThetaI_man:tam02_param}
\end{multline}
</math>|}}
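A minimal sketch of the sample analogue of ([[#eq:ThetaI_man:tam02_param |eq:ThetaI_man:tam02_param]]), under assumptions that are not in the source: <math>\ew</math> is scalar (<math>d=1</math>), <math>(\ew,\xL,\xU)</math> takes finitely many values so that <math>\E_\sP(\ey|\ew,\xL,\xU)</math> can be estimated by cell means, and <math>f</math> is the hypothetical linear specification <math>f(w,x;\theta)=\theta_0+\theta_1 w+\theta_2 x</math>, which is weakly increasing in <math>x</math> when <math>\Theta</math> is restricted to <math>\theta_2\ge 0</math>. Function names are illustrative.

```python
import numpy as np
from collections import defaultdict

def f_linear(w, x, theta):
    """Hypothetical regression function; weakly increasing in x if theta[2] >= 0."""
    return theta[0] + theta[1] * w + theta[2] * x

def in_region(theta, y, w, x_lo, x_hi, f=f_linear, tol=0.0):
    """Check f(w, xL; theta) <= mean(y | w, xL, xU) <= f(w, xU; theta)
    in every observed cell of the discrete variables (w, xL, xU)."""
    cells = defaultdict(list)
    for yi, wi, lo, hi in zip(y, w, x_lo, x_hi):
        cells[(wi, lo, hi)].append(yi)
    for (wi, lo, hi), ys in cells.items():
        m = np.mean(ys)  # sample analogue of E_P(y | w, xL, xU)
        if not (f(wi, lo, theta) - tol <= m <= f(wi, hi, theta) + tol):
            return False
    return True
```

In practice a small positive <code>tol</code> (or a formal inference procedure) would be needed, since sampling noise in the cell means can otherwise empty the estimated region.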

<ref name="auc:bug:hot17"></ref> study Identification [[#IP:man:tam02_param |Problem]] for the case of missing covariate data ''without'' imposing the mean independence restriction of <ref name="man:tam02"></ref> (Assumption MI in Identification [[#IP:interval_covariate |Problem]]).

As discussed in [[#foot:auc:bug:hot17 |footnote]], restriction MI is undesirable in this context because it implies the assumption that data are missing at random.

<ref name="auc:bug:hot17"></ref> characterize <math>\idr{\theta}</math> under the weaker assumptions, but face the problem that this characterization is usually too complex to compute or to use for inference.

They therefore provide outer regions that are easier to compute, and they show that these regions are informative and relatively easy to use.

===<span id="subsec:meas_error"></span>Measurement Error and Data Combination===


They apply the resulting sharp bounds to learn about school performance when the observed test scores may not be valid for all students.

<ref name="mol08"></ref> provides sharp bounds on the distribution of a misclassified outcome variable under an array of different assumptions on the extent and type of misclassification.

A completely different problem is that of data combination.

Applied economists often face the problem that no single data set contains all the variables that are necessary to conduct inference on a population of interest.


===<span id="subsec:applications_PIPD"></span>Further Theoretical Advances and Empirical Applications===

To discuss the partial identification approach to learning features of probability distributions in some detail, while keeping this chapter to a manageable length, I have focused on a selection of papers.