guide:E54e650503: Difference between revisions

No edit summary |

mNo edit summary |

||

| (4 intermediate revisions by 2 users not shown) | |||

| Line 1: | Line 1: | ||

<div class="d-none"><math> | |||

\newcommand{\NA}{{\rm NA}} | |||

\newcommand{\mat}[1]{{\bf#1}} | |||

\newcommand{\exref}[1]{\ref{##1}} | |||

\newcommand{\secstoprocess}{\all} | |||

\newcommand{\NA}{{\rm NA}} | |||

\newcommand{\mathds}{\mathbb}</math></div> | |||

Having mastered permutations, we now consider combinations. Let <math>U</math> be a set with <math>n</math> | |||

elements; we want to count the number of distinct subsets of the set | |||

<math>U</math> that have exactly <math>j</math> elements. The empty set and the set <math>U</math> are considered to | |||

be subsets of <math>U</math>. The empty set is usually denoted by <math>\phi</math>. | |||

<span id="exam 3.7"/> | |||

'''Example''' | |||

Let <math>U = \{a,b,c\}</math>. The subsets of <math>U</math> are | |||

<math display="block"> | |||

\phi,\ \{a\},\ \{b\},\ \{c\},\ \{a,b\},\ \{a,c\},\ \{b,c\},\ \{a,b,c\}\ . | |||

</math> | |||

===Binomial Coefficients=== | |||

The number of distinct subsets with <math>j</math> elements that can be chosen from a set with | |||

<math>n</math> elements is denoted by <math>{n \choose j}</math>, and is pronounced “<math>n</math> choose <math>j</math>.” The | |||

number <math>n \choose j</math> is called a ''binomial coefficient.'' This | |||

terminology comes from an application to algebra which will be discussed later in this section. | |||

In the above example, there is one subset with no elements, three subsets with exactly | |||

1 element, three subsets with exactly 2 elements, and one subset with exactly 3 | |||

elements. Thus, <math>{3 \choose 0} = 1</math>, <math>{3 \choose 1} = 3</math>, <math>{3 \choose 2} = 3</math>, | |||

and <math>{3 \choose 3} = 1</math>. Note that there are <math>2^3 = 8</math> subsets in all. (We have | |||

already seen that a set with <math>n</math> elements has <math>2^n</math> subsets; (see [[exercise:233bb6143e|Exercise]]). It follows that | |||

<math display="block"> | |||

{3 \choose 0} + {3 \choose 1} + {3 \choose 2} + {3 \choose 3} = 2^3 = 8\ , | |||

</math> | |||

<math display="block"> | |||

{n \choose 0} = {n \choose n} = 1\ . | |||

</math> | |||

Assume that <math>n > 0</math>. Then, since there is only one way to choose a set with no | |||

elements and only one way to choose a set with <math>n</math> elements, the remaining values of | |||

<math>n \choose j</math> are determined by the following ''recurrence relation'': | |||

{{proofcard|Theorem|thm_3.6|For integers <math>n</math> and <math>j</math>, with <math>0 < j < n</math>, the binomial coefficients satisfy: | |||

<span id{{=}}"eq 3.3"/> | |||

<math display="block"> | |||

\begin{equation} {n \choose j} = {{n-1} \choose j} + {{n-1} \choose {j - 1}}\ . | |||

\label{eq 3.3} | |||

\end{equation} | |||

</math>|We wish to choose a subset of <math>j</math> elements. Choose an element <math>u</math> of <math>U</math>. | |||

Assume first that we do not want <math>u</math> in the subset. Then we must choose the | |||

<math>j</math> elements from a set of <math>n - 1</math> elements; this can be done in <math>{{n-1} \choose j}</math> | |||

ways. On the other hand, assume that we do want <math>u</math> in the subset. Then we must | |||

choose the other <math>j - 1</math> elements from the remaining <math>n - 1</math> elements of <math>U</math>; this can | |||

be done in <math>{{n-1} \choose {j - 1}}</math> ways. Since <math>u</math> is either in our subset or not, | |||

the number of ways that we can choose a subset of <math>j</math> elements is the sum of the | |||

number of subsets of <math>j</math> elements which have | |||

<math>u</math> as a member and the number which do not---this is what Equation \ref{eq 3.3} states.}} | |||

The binomial coefficient <math>n \choose j</math> is defined to be 0, if <math>j < 0</math> or if <math>j > n</math>. With | |||

this definition, the restrictions on <math>j</math> in [[#thm 3.6 |Theorem]] are unnecessary. | |||

===Pascal's Triangle=== | |||

The [[#eq 3.3 |relation]], together with the knowledge that | |||

<math display="block"> | |||

{n \choose 0} = {n \choose n }= 1\ , | |||

</math> | |||

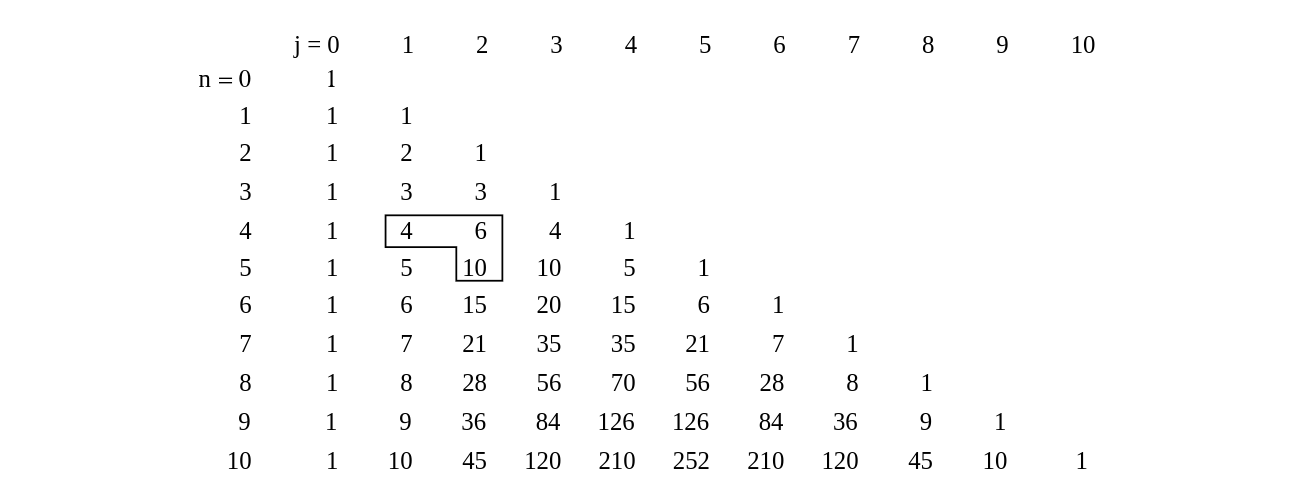

determines completely the numbers <math>n \choose j</math>. We can use these relations to | |||

determine the famous ''triangle of Pascal,'' which exhibits all these | |||

numbers in matrix form (see [[#fig 3.6|Figure]]). | |||

<div id="fig 3.6" class="d-flex justify-content-center"> | |||

[[File:guide_e6d15_PSfig3-6.png | 600px | thumb |Pascal's triangle.]] | |||

</div> | |||

The <math>n</math>th row of this triangle has the entries <math>n \choose 0</math>, <math>n \choose 1</math>,..., <math>n | |||

\choose n</math>. We know that the first and last of these numbers are 1. The remaining | |||

numbers are determined by the recurrence relation Equation \ref{eq 3.3}; that is, the | |||

entry <math>{n \choose j}</math> for <math>0 < j < n</math> in the <math>n</math>th row of Pascal's | |||

triangle is the ''sum'' of the entry immediately above and the one immediately to | |||

its left in the <math>(n - 1)</math>st row. For example, <math>{5 \choose 2} = 6 + 4 = 10</math>. | |||

This algorithm for constructing Pascal's triangle can be used to write a computer | |||

program to compute the binomial coefficients. You are asked to do this in [[exercise:2f7db43fe0 |Exercise]]. | |||

While Pascal's triangle provides a way to construct recursively the binomial | |||

coefficients, it is also possible to give a formula for <math>n \choose j</math>. | |||

{{proofcard|Theorem|thm_3.7|The binomial coefficients are given by the formula | |||

<span id{{=}}"eq 3.4"/> | |||

<math display="block"> | |||

\begin{equation} {n \choose j }= \frac{(n)_j}{j!}\ . | |||

\label{eq 3.4} | |||

\end{equation} | |||

</math>|Each subset of size <math>j</math> of a set of size <math>n</math> can be ordered in <math>j!</math> ways. Each | |||

of these orderings is a <math>j</math>-permutation of the set of size <math>n</math>. The number of | |||

<math>j</math>-permutations is <math>(n)_j</math>, so the number of subsets of size <math>j</math> is | |||

<math display="block"> | |||

\frac{(n)_j}{j!}\ . | |||

</math> | |||

This completes the proof.}} | |||

The above formula can be rewritten in the form | |||

<math display="block"> | |||

{n \choose j} = \frac{n!}{j!(n-j)!}\ . | |||

</math> | |||

This immediately shows that | |||

<math display="block"> | |||

{n \choose j} = {n \choose {n-j}}\ . | |||

</math> | |||

When using Equation \ref{eq 3.4} in the calculation of <math>{n \choose j}</math>, if one alternates | |||

the multiplications and divisions, then all of the intermediate values in the | |||

calculation are integers. Furthermore, none of these intermediate values exceed the | |||

final value. (See [[exercise:C2ad1f21c9 |Exercise]].) | |||

Another point that should be made concerning Equation \ref{eq 3.4} is that if it is | |||

used to ''define'' the binomial coefficients, then it is no longer necessary to require | |||

<math>n</math> to be a positive integer. The variable <math>j</math> must still be a non-negative integer under | |||

this definition. This idea is useful when extending the Binomial Theorem to general exponents. | |||

(The Binomial Theorem for non-negative integer exponents is given below as [[#thm 3.9 |Theorem]].) | |||

===Poker Hands=== | |||

<span id="exam 3.8"/> | |||

'''Example''' | |||

kind'' beats a ''full house.'' A poker hand is a random subset of 5 elements from | |||

a deck of 52 cards. A hand has four of a kind if it has four cards with the same | |||

value---for example, four sixes or four kings. It is a full house if it has three of | |||

one value and two of a second---for example, three twos and two queens. Let us see | |||

which hand is more likely. How many hands have four of a kind? There are 13 ways | |||

that we can specify the value for the four cards. For each of these, there are 48 | |||

possibilities for the fifth card. Thus, the number of four-of-a-kind hands is <math>13 | |||

\cdot 48 = 624</math>. Since the total number of possible hands is <math>{52 \choose 5} = | |||

2598960</math>, the probability of a hand with four of a kind is <math>624/2598960 = .00024</math>. | |||

Now consider the case of a full house; how many such hands are there? There are 13 | |||

choices for the value which occurs three times; for each of these there are | |||

<math>{4 \choose 3} = 4</math> choices for the particular three cards of this value that are in | |||

the hand. Having picked these three cards, there are 12 possibilities for the value | |||

which occurs twice; for each of these there are <math>{4 \choose 2} = 6</math> possibilities for | |||

the particular pair of this value. Thus, the number of full houses is <math>13 \cdot 4 | |||

\cdot 12 \cdot 6 = 3744</math>, and the probability of obtaining a hand with a full house is | |||

<math>3744/2598960 = .0014</math>. Thus, while both types of hands are unlikely, you are six | |||

times more likely to obtain a full house than four of a kind. | |||

===Bernoulli Trials=== | |||

Our principal use of the binomial coefficients will occur in the study of one of the | |||

important chance processes called ''Bernoulli trials.'' | |||

{{defncard|label=|id=def 3.4|A ''Bernoulli trials process'' is a sequence of | |||

<math>n</math> chance experiments such that | |||

<ul><li> Each experiment has two possible outcomes, which we may call ''success'' | |||

and ''failure.'' | |||

</li> | |||

<li> The probability <math>p</math> of success on each experiment is the same for each | |||

experiment, and this probability is not affected by any knowledge of previous | |||

outcomes. The probability <math>q</math> of failure is given by <math>q = 1 - p</math>. | |||

</li> | |||

</ul>}} | |||

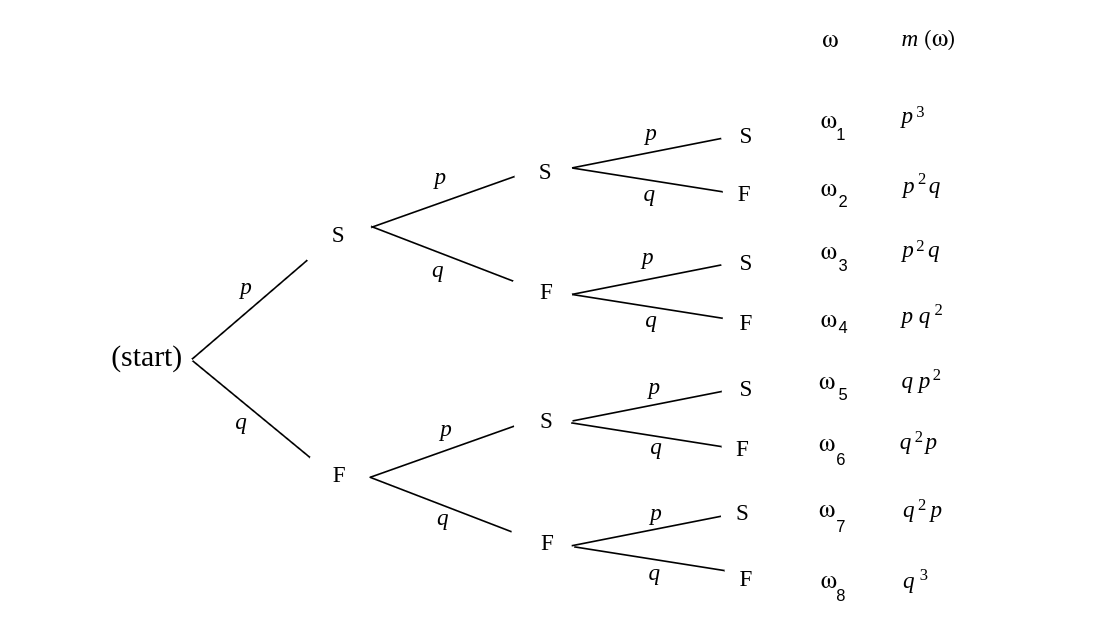

<div id="fig 3.7" class="d-flex justify-content-center"> | |||

[[File:guide_e6d15_PSfig3-7.png | 600px | thumb | Tree diagram of three Bernoulli trials. ]] | |||

</div> | |||

<span id="exam 3.9"/> | |||

'''Example''' | |||

<ul><li> A coin is tossed ten times. The two possible outcomes are heads and tails. The | |||

probability of heads on any one toss is 1/2. | |||

</li> | |||

<li> An opinion poll is carried out by asking 1000 people, randomly chosen from the | |||

population, if they favor the Equal Rights Amendment---the two outcomes being yes and no. The | |||

probability <math>p</math> of a yes answer (i.e., a success) indicates the proportion of people | |||

in the entire population that favor this amendment. | |||

</li> | |||

<li> A gambler makes a sequence of 1-dollar bets, betting each time on black at | |||

roulette at Las Vegas. Here a success is winning 1 dollar and a failure is losing 1 | |||

dollar. Since in American roulette the gambler wins if the ball stops on one of 18 | |||

out of 38 positions and loses otherwise, the probability of winning is <math>p = 18/38 = | |||

.474</math>. | |||

</li> | |||

</ul> | |||

To analyze a Bernoulli trials process, we choose as our sample space a binary tree and | |||

assign a probability distribution to the paths in this tree. Suppose, for example, that we | |||

have three Bernoulli trials. The possible outcomes are indicated in the tree diagram | |||

shown in [[#fig 3.7|Figure]]. We define <math>X</math> to be the random variable which | |||

represents the outcome of the process, i.e., an ordered triple of S's and F's. The | |||

probabilities assigned to the branches of the tree represent the probability for each | |||

individual trial. Let the outcome of the <math>i</math>th trial be denoted by the random | |||

variable | |||

<math>X_i</math>, with distribution function <math>m_i</math>. Since we have assumed that outcomes on any | |||

one trial do not affect those on another, we assign the same probabilities at each | |||

level of the tree. An outcome | |||

<math>\omega</math> for the entire experiment will be a path through the tree. For example, | |||

<math>\omega_3</math> represents the outcomes SFS. Our frequency interpretation of probability | |||

would lead us to expect a fraction <math>p</math> of successes on the first experiment; of these, | |||

a fraction <math>q</math> of failures on the second; and, of these, a fraction <math>p</math> of successes | |||

on the third experiment. This suggests assigning probability <math>pqp</math> to the outcome | |||

<math>\omega_3</math>. More generally, we assign a distribution function <math>m(\omega)</math> for | |||

paths <math>\omega</math> by defining <math>m(\omega)</math> to be the product of the branch probabilities | |||

along the path <math>\omega</math>. Thus, the probability that the three events S on the first | |||

trial, F on the second trial, and S on the third trial occur is the product of the | |||

probabilities for the individual events. We shall see in the next chapter that this | |||

means that the events involved are ''independent'' in the sense that the knowledge | |||

of one event does not affect our prediction for the occurrences of the other events. | |||

===Binomial Probabilities=== | |||

We shall be particularly interested in the probability that in <math>n</math> Bernoulli trials | |||

there are exactly <math>j</math> successes. We denote this probability by | |||

<math>b(n,p,j)</math>. Let us calculate the particular value <math>b(3,p,2)</math> from our tree measure. | |||

We see that there are three paths which have exactly two successes and one failure, | |||

namely <math>\omega_2</math>, <math>\omega_3</math>, and <math>\omega_5</math>. Each of these paths has the same | |||

probability <math>p^2q</math>. Thus <math>b(3,p,2) = 3p^2q</math>. Considering all possible numbers of | |||

successes we have | |||

<math display="block"> | |||

\begin{eqnarray*} | |||

b(3,p,0) &=& q^3\ ,\\ | |||

b(3,p,1) &=& 3pq^2\ ,\\ | |||

b(3,p,2) &=& 3p^2q\ ,\\ | |||

b(3,p,3) &=& p^3\ . | |||

\end{eqnarray*} | |||

</math> | |||

We can, in the same manner, carry out a tree measure for <math>n</math> experiments and determine | |||

<math>b(n,p,j)</math> for the general case of <math>n</math> Bernoulli trials. | |||

{{proofcard|Theorem|thm_3.8|Given <math>n</math> Bernoulli trials with probability <math>p</math> of | |||

success on each experiment, the probability of exactly <math>j</math> successes is | |||

<math display="block"> | |||

b(n,p,j) = {n \choose j} p^j q^{n - j} | |||

</math> | |||

where <math>q = 1 - p</math>.|We construct a tree measure as described above. We want to find the sum of the | |||

probabilities for all paths which have exactly <math>j</math> successes and <math>n - j</math> failures. | |||

Each such path is assigned a probability <math>p^j q^{n - j}</math>. How many such paths are | |||

there? To specify a path, we have to pick, from the <math>n</math> possible trials, a subset of | |||

<math>j</math> to be successes, with the remaining <math>n-j</math> outcomes being failures. We can do this | |||

in | |||

<math>n \choose j</math> ways. Thus the sum of the probabilities is | |||

<math display="block"> | |||

b(n,p,j) = {n \choose j} p^j q^{n - j}\ . | |||

</math>}} | |||

<span id="exam 3.10"/> | |||

'''Example''' | |||

probability that exactly three heads turn up? The answer is | |||

<math display="block"> | |||

b(6,.5,3) = {6 \choose 3} \left(\frac12\right)^3 \left(\frac12\right)^3 = 20 \cdot | |||

\frac1{64} = .3125\ . | |||

</math> | |||

<span id="exam 3.11"/> | |||

'''Example''' | |||

that we obtain exactly one 6? We treat this as Bernoulli trials with ''success'' | |||

= “rolling a 6” and ''failure'' = “rolling some number other than a 6.” Then | |||

<math>p = 1/6</math>, and the probability of exactly one success in four trials is | |||

<math display="block"> | |||

b(4,1/6,1) = {4 \choose 1 }\left(\frac16\right)^1 \left(\frac56\right)^3 = .386\ . | |||

</math> | |||

To compute binomial probabilities using the computer, multiply the function | |||

choose<math>(n,k)</math> by <math>p^kq^{n - k}</math>. The program '''BinomialProbabilities''' prints out the binomial probabilities | |||

<math>b(n, p, k)</math> for <math>k</math> between <math>kmin</math> and <math>kmax</math>, and the sum of these probabilities. We have run this program for <math>n = 100</math>, <math>p = 1/2</math>, <math>kmin = 45</math>, and <math>kmax = 55</math>; the output is shown in | |||

[[#table 3.27 |Table]]. Note that the individual probabilities are quite small. The probability of | |||

exactly 50 heads in 100 tosses of a coin is about .08. Our intuition tells us that this is the most | |||

likely outcome, which is correct; but, all the same, it is not a very likely outcome. | |||

<span id="table 3.27"/> | |||

{|class="table" | |||

|+ Binomial probabilities for <math>n = 100,\ p = 1/2</math>. | |||

|- | |||

|<math>k</math> || <math>b(n,p,k)</math> | |||

|- | |||

|45 || .0485 | |||

|- | |||

|46 || .0580 | |||

|- | |||

|47 || .0666 | |||

|- | |||

|48 || .0735 | |||

|- | |||

|49 || .0780 | |||

|- | |||

|50 || .0796 | |||

|- | |||

|51 || .0780 | |||

|- | |||

|52 || .0735 | |||

|- | |||

|53 || .0666 | |||

|- | |||

|54 || .0580 | |||

|- | |||

|55 || .0485 | |||

|} | |||

===Binomial Distributions=== | |||

{{defncard|label=|id=def 3.5|Let <math>n</math> be a positive integer, and let <math>p</math> be a real | |||

number between 0 and 1. Let <math>B</math> be the random variable which counts the number of | |||

successes in a Bernoulli trials process with parameters <math>n</math> and <math>p</math>. Then the | |||

distribution <math>b(n, p, k)</math> of <math>B</math> is called the ''binomial distribution''.}} | |||

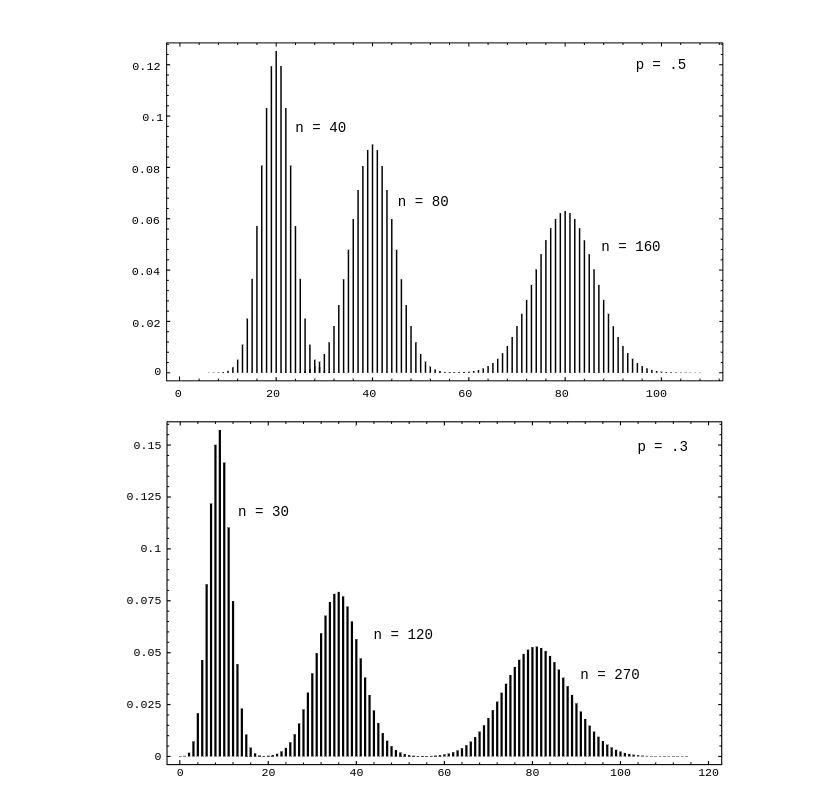

We can get a better idea about the binomial distribution by graphing this distribution | |||

for different values of <math>n</math> and <math>p</math> (see [[#fig 3.8|Figure]]). The plots in this figure | |||

were generated using the program ''' BinomialPlot'''. | |||

<div id="fig 3.8" class="d-flex justify-content-center"> | |||

[[File:guide_e6d15_PSfig3-8.png | 600px | thumb | Binomial distributions. ]] | |||

</div> | |||

We have run this program for <math>p = .5</math> and <math>p = .3</math>. Note that even for <math>p = .3</math> | |||

the graphs are quite symmetric. We shall have an explanation for this in | |||

[[guide:Ee45340c30#chp 9 |Chapter]]. We also note that the highest probability occurs around the | |||

value <math>np</math>, but that these highest probabilities get smaller as <math>n</math> increases. We | |||

shall see in Chapter [[guide:E4fd10ce73|Expected Value and Variance]] that <math>np</math> is the ''mean'' or ''expected'' | |||

value of the binomial distribution <math>b(n,p,k)</math>. | |||

The following example gives a nice way to see the binomial distribution, when <math>p = | |||

1/2</math>. | |||

<span id="exam 3.2.1"/> | |||

'''Example''' | |||

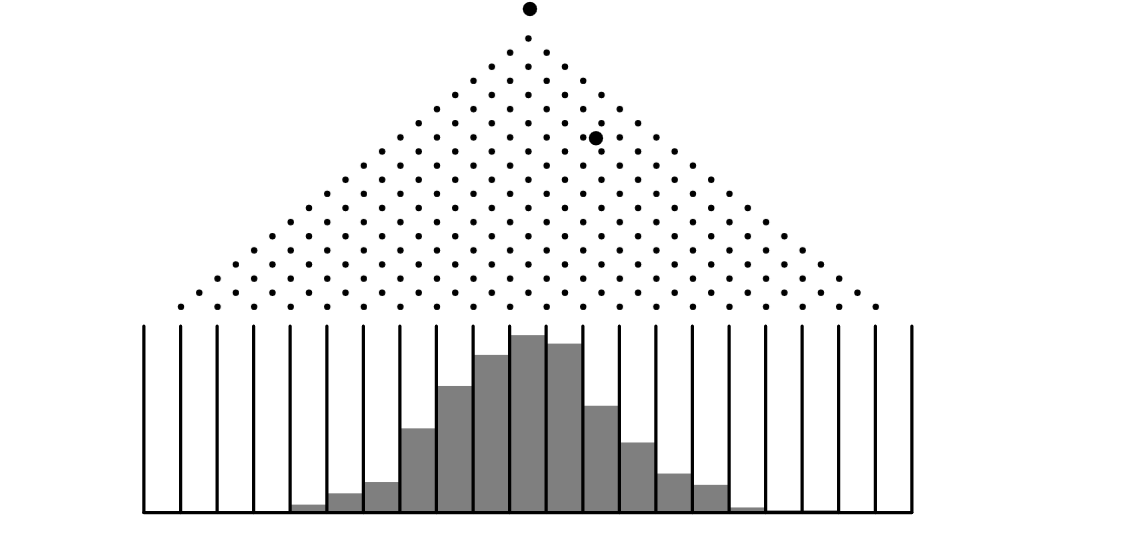

A ''Galton board'' is a board in which a large number of BB-shots are | |||

dropped from a chute at the top of the board and deflected off a number of pins | |||

on their way down to the bottom of the board. The final position of each slot | |||

is the result of a number of random deflections either to the left or the | |||

right. We have written a program ''' GaltonBoard''' to simulate this | |||

experiment. | |||

We have run the program for the case of 20 rows of pins and 10,00 shots being | |||

dropped. We show the result of this simulation in [[#fig 2.22|Figure]]. | |||

<div id="fig 2.22" class="d-flex justify-content-center"> | |||

[[File:guide_e6d15_PSfig2-22.png | 600px | thumb | Simulation of the Galton board. ]] | |||

</div> | |||

Note that if we write 0 every time the shot is deflected to the left, and 1 | |||

every time it is deflected to the right, then the path of the shot can be | |||

described by a sequence of 0's and 1's of length <math>n</math>, just as for the <math>n</math>-fold | |||

coin toss. | |||

The distribution shown in [[#fig 2.22|Figure]] is an example of an empirical | |||

distribution, in the sense that it comes about by means of a sequence of | |||

experiments. As expected, this empirical distribution resembles the corresponding | |||

binomial distribution with parameters <math>n = 20</math> and <math>p = 1/2</math>. | |||

===Hypothesis Testing=== | |||

<span id="exam 3.12"/> | |||

'''Example''' | |||

Suppose that ordinary aspirin has been found effective against headaches 60 percent of the time, and that a drug company claims | |||

that its new aspirin with a special headache additive is more effective. We can test this claim as follows: we call their claim the ''alternate hypothesis,'' and its negation, that the additive has no appreciable effect, the ''null hypothesis.'' Thus | |||

the null hypothesis is that <math>p = .6</math>, and the alternate hypothesis is that <math>p > .6</math>, | |||

where <math>p</math> is the probability that the new aspirin is effective. | |||

We give the aspirin to <math>n</math> people to take when they have a headache. We want to | |||

find a number <math>m</math>, called the ''critical value'' for our experiment, such that we | |||

reject the null hypothesis if at least <math>m</math> people are cured, and otherwise we accept | |||

it. How should we determine this critical value? | |||

First note that we can make two kinds of errors. The first, often called a ''type 1 error'' in statistics, is to reject the null | |||

hypothesis when in fact it is true. The second, called a ''type 2 error,'' is | |||

to accept the null hypothesis when it is false. To determine the probability of both these types of | |||

errors we introduce a function <math>\alpha(p)</math>, defined to be the | |||

probability that we reject the null hypothesis, where this probability is calculated under | |||

the assumption that the null hypothesis is true. In the present case, we have | |||

<math display="block"> | |||

\alpha(p) = \sum_{m \leq k \leq n} b(n,p,k)\ . | |||

</math> | |||

Note that <math>\alpha(.6)</math> is the probability of a type 1 error, since this is the | |||

probability of a high number of successes for an ineffective additive. So for a given | |||

<math>n</math> we want to choose <math>m</math> so as to make <math>\alpha(.6)</math> quite small, to reduce the | |||

likelihood of a type 1 error. But as <math>m</math> increases above the most probable value <math>np | |||

= .6n</math>, <math>\alpha(.6)</math>, being the upper tail of a binomial distribution, approaches 0. | |||

Thus ''increasing'' <math>m</math> makes a type 1 error less likely. | |||

Now suppose that the additive really is effective, so that <math>p</math> is appreciably | |||

greater than .6; say <math>p = .8</math>. (This alternative value of <math>p</math> is chosen arbitrarily; | |||

the following calculations depend on this choice.) Then choosing <math>m</math> well below <math>np = | |||

.8n</math> will increase <math>\alpha(.8)</math>, since now | |||

<math>\alpha(.8)</math> is all but the lower tail of a binomial distribution. Indeed, if we put | |||

<math>\beta(.8) = 1 - \alpha(.8)</math>, then <math>\beta(.8)</math> gives us the probability of a type 2 | |||

error, and so ''decreasing'' <math>m</math> makes a type 2 error less likely. | |||

The manufacturer would like to guard against a type 2 error, since if such an | |||

error is made, then the test does not show that the new drug is better, when in fact | |||

it is. If the alternative value of <math>p</math> is chosen closer to the value of <math>p</math> given in | |||

the null hypothesis (in this case <math>p =.6</math>), then for a given test population, the | |||

value of <math>\beta</math> will increase. | |||

So, if the manufacturer's statistician chooses an alternative value for <math>p</math> which is | |||

close to the value in the null hypothesis, then it will be an expensive proposition | |||

(i.e., the test population will have to be large) to reject the null hypothesis with a | |||

small value of <math>\beta</math>. | |||

What we hope to do then, for a given test population <math>n</math>, is to choose a value of | |||

<math>m</math>, if possible, which makes both these probabilities small. If we make a type 1 | |||

error we end up buying a lot of essentially ordinary aspirin at an inflated price; a | |||

type 2 error means we miss a bargain on a superior medication. Let us say that we | |||

want our critical number <math>m</math> to make each of these undesirable cases less than 5 | |||

percent probable. | |||

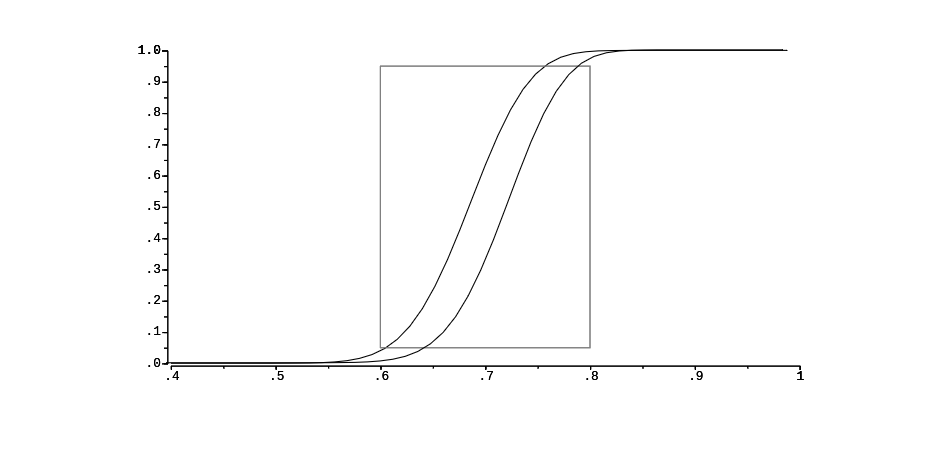

We write a program ''' PowerCurve''' to plot, for <math>n = 100</math> and | |||

selected values of | |||

<math>m</math>, the function <math>\alpha(p)</math>, for <math>p</math> ranging from .4 to 1. The result is shown in | |||

[[#fig 3.9|Figure]]. We include in our graph a box (in dotted lines) from .6 to .8, | |||

with bottom and top at heights .05 and .95. Then a value for <math>m</math> satisfies our | |||

requirements if and only if the graph of <math>\alpha</math> enters the box from the bottom, and | |||

leaves from the top (why?---which is the type 1 and which is the type 2 criterion?). | |||

As <math>m</math> increases, the graph of <math>\alpha</math> moves to the right. A few experiments have | |||

shown us that <math>m = 69</math> is the smallest value for | |||

<math>m</math> that thwarts a type 1 error, while <math>m = 73</math> is the largest which thwarts a | |||

type 2. So we may choose our critical value between 69 and 73. If we're more intent | |||

on avoiding a type 1 error we favor 73, and similarly we favor 69 if we regard a | |||

type 2 error as worse. Of course, the drug company may not be happy with having as | |||

much as a 5 percent chance of an error. They might insist on having a 1 percent chance of an error. For this we would have to increase the number <math>n</math> of trials (see [[exercise:Ad462f2fca |Exercise]]). | |||

<div id="fig 3.9" class="d-flex justify-content-center"> | |||

[[File:guide_e6d15_PSfig3-9.png | 600px | thumb | The power curve. ]] | |||

</div> | |||

===Binomial Expansion=== | |||

We next remind the reader of an application of the binomial coefficients to algebra. | |||

This is the ''binomial expansion,'' from which we get the term binomial coefficient. | |||

{{proofcard|Theorem|thm_3.9|''' (Binomial Theorem)''' The quantity <math>(a + | |||

b)^n</math> can be expressed in the form | |||

<math display="block"> | |||

(a + b)^n = \sum_{j = 0}^n {n \choose j} a^j b^{n - j}\ . | |||

</math>|To see that this expansion is correct, write | |||

<math display="block"> | |||

(a + b)^n = (a + b)(a + b) \cdots (a + b)\ . | |||

</math> | |||

When we multiply this out we will have a sum of terms each of which results from | |||

a choice of an <math>a</math> or <math>b</math> for each of <math>n</math> factors. When we choose <math>j</math> | |||

<math>a</math>'s and <math>(n - j)</math> <math>b</math>'s, | |||

we obtain a term of the form <math>a^j b^{n - j}</math>. To determine | |||

such a term, we have to specify | |||

<math>j</math> of the <math>n</math> terms in the product from which we | |||

choose the <math>a</math>. This can be done in <math>n \choose j</math> ways. Thus, collecting these | |||

terms in the sum contributes a term <math>{n \choose j} a^j b^{n - j}</math>.}} | |||

For example, we have | |||

<math display="block"> | |||

\begin{eqnarray*} (a + b)^0 & = & 1 \\ (a + b)^1 & = & a + b \\ (a + b)^2 & = & a^2 + | |||

2ab + b^2 \\ (a + b)^3 & = & a^3 + 3a^2b + 3ab^2 + b^3\ . | |||

\end{eqnarray*} | |||

</math> | |||

We see here that the coefficients of successive powers do indeed yield Pascal's triangle. | |||

{{proofcard|Corollary|cor_3.1|The sum of the elements in the <math>n</math>th row of | |||

Pascal's triangle is <math>2^n</math>. If the elements in the <math>n</math>th row of Pascal's triangle | |||

are added with alternating signs, the sum is 0.|The first statement in the corollary follows from the fact that | |||

<math display="block"> | |||

2^n = (1 + 1)^n = {n \choose 0} + {n \choose 1} + {n \choose 2} + \cdots + {n | |||

\choose n}\ , | |||

</math> | |||

and the second from the fact that | |||

<math display="block"> | |||

0 = (1 - 1)^n = {n \choose 0} - {n \choose 1} + {n \choose 2}- \cdots + {(-1)^n}{n | |||

\choose n}\ . | |||

</math>}} | |||

The first statement of the corollary tells us that the number of subsets of a set of | |||

<math>n</math> elements is <math>2^n</math>. We shall use the second statement in our next application of | |||

the binomial theorem. | |||

We have seen that, when <math>A</math> and <math>B</math> are any two events (cf. [[guide:C9e774ade5|Section]] ), | |||

<math display="block"> | |||

P(A \cup B) = P(A) + P(B) - P(A \cap B). | |||

</math> | |||

We now extend this theorem to a more general version, which will enable us to find | |||

the probability that at least one of a number of events occurs. | |||

===Inclusion-Exclusion Principle=== | |||

{{proofcard|Theorem|thm_3.10|Let <math>P</math> be a probability distribution on a sample space | |||

<math>\Omega</math>, and let | |||

<math>\{A_1,\ A_2,\ \dots,\ A_n\}</math> be a finite set of events. Then | |||

<math display="block"> | |||

P(A_1 \cup A_2 \cup \cdots \cup A_n) = \sum_{i = 1}^n P(A_i)\ - | |||

\sum_{1 \leq i < j \leq n} P(A_i \cap A_j) | |||

</math> | |||

<span id{{=}}"eq 3.5"/> | |||

<math display="block"> | |||

\begin{equation} | |||

\ \ \ \ \ \ \ \ \ \ \ \ \ \ \ \ \ \ \ \ \ \ \ \ \ \ \ \ \ \ \ \ \ \ \ \ \ \ \ \ \ \ \ | |||

\ | |||

\ \ \ \ \ \ \ \ \ \ \ \ \ + \sum_{1 \leq i < j < k \leq n} P(A_i \cap A_j \cap A_k) - | |||

\cdots\ .\label{eq 3.5} | |||

\end{equation} | |||

</math> | |||

That is, to find the probability that at least one of <math>n</math> events <math>A_i</math> occurs, first add the | |||

probability of each event, then subtract the probabilities of all possible two-way | |||

intersections, add the probability of all three-way intersections, and so forth.|If the outcome <math>\omega</math> occurs in at least one of the events <math>A_i</math>, its probability is added | |||

exactly once by the left side of Equation \ref{eq 3.5}. We must show that it is added | |||

exactly once by the right side of Equation \ref{eq 3.5}. Assume that <math>\omega</math> is in | |||

exactly <math>k</math> of the sets. Then its probability is added <math>k</math> times in the first term, | |||

subtracted <math>k \choose 2</math> times in the second, added <math>k \choose 3</math> times in the third | |||

term, and so forth. Thus, the total number of times that it is added is | |||

<math display="block"> | |||

{k \choose 1} - {k \choose 2} + {k \choose 3} - \cdots {(-1)^{k-1}} {k \choose k}\ . | |||

</math> | |||

But | |||

<math display="block"> | |||

0 = (1 - 1)^k = \sum_{j = 0}^k {k \choose j} (-1)^j = {k \choose 0} - \sum_{j = 1}^k | |||

{k \choose j} {(-1)^{j - 1}}\ . | |||

</math> | |||

Hence, | |||

<math display="block"> | |||

1 = {k \choose 0} = \sum_{j = 1}^k {k \choose j} {(-1)^{j - 1}}\ . | |||

</math> | |||

If the outcome <math>\omega</math> is not in any of the events <math>A_i</math>, then it is not counted on either side of | |||

the equation.}} | |||

===Hat Check Problem=== | |||

<span id="exam 3.13"/> | |||

'''Example''' | |||

We return to the hat check problem discussed in [[guide:1cf65e65b3|Section]], that is, the problem of finding the probability that a random | |||

permutation contains at least one fixed point. Recall that a permutation is a | |||

one-to-one map of a set | |||

<math>A = \{a_1,a_2,\dots,a_n\}</math> onto itself. Let <math>A_i</math> be the event that the <math>i</math>th | |||

element <math>a_i</math> remains fixed under this map. If we require that <math>a_i</math> is fixed, then | |||

the map of the remaining <math>n - 1</math> elements provides an arbitrary permutation of <math>(n - | |||

1)</math> objects. Since there are <math>(n - 1)!</math> such permutations, <math>P(A_i) = (n - 1)!/n! = | |||

1/n</math>. Since there are <math>n</math> choices for | |||

<math>a_i</math>, the first term of Equation \ref{eq 3.5} is 1. In | |||

the same way, to have a particular pair <math>(a_i,a_j)</math> fixed, we can choose any | |||

permutation of the remaining <math>n - 2</math> elements; there are <math>(n - 2)!</math> such choices and | |||

thus | |||

<math display="block"> | |||

P(A_i \cap A_j) = \frac{(n - 2)!}{n!} = \frac 1{n(n - 1)}\ . | |||

</math> | |||

The number of terms of this form in the right side of Equation \ref{eq 3.5} is | |||

<math display="block"> | |||

{n | |||

\choose 2} = \frac{n(n - 1)}{2!}\ . | |||

</math> | |||

Hence, the second term of Equation \ref{eq 3.5} is | |||

<math display="block"> | |||

-\frac{n(n - 1)}{2!} \cdot \frac 1{n(n - 1)} = -\frac 1{2!}\ . | |||

</math> | |||

Similarly, for any specific three events <math>A_i</math>, <math>A_j</math>, <math>A_k</math>, | |||

<math display="block"> | |||

P(A_i \cap A_j \cap A_k) = \frac{(n - 3)!}{n!} = \frac 1{n(n - 1)(n - 2)}\ , | |||

</math> | |||

and the number of such terms is | |||

<math display="block"> | |||

{n \choose 3} = \frac{n(n - 1)(n - 2)}{3!}\ , | |||

</math> | |||

making the third term of Equation \ref{eq 3.5} equal to | |||

1/3!. Continuing in this way, we obtain | |||

<math display="block"> | |||

P(\mbox {at\ least\ one\ fixed\ point}) = 1 - \frac 1{2!} + \frac 1{3!} - \cdots | |||

(-1)^{n-1} \frac 1{n!} | |||

</math> | |||

and | |||

<math display="block"> | |||

P(\mbox {no\ fixed\ point}) = \frac 1{2!} - \frac 1{3!} + \cdots (-1)^n \frac 1{n!}\ . | |||

</math> | |||

From calculus we learn that | |||

<math display="block"> | |||

e^x = 1 + x + \frac 1{2!}x^2 + \frac 1{3!}x^3 + \cdots + \frac 1{n!}x^n + \cdots\ . | |||

</math> | |||

Thus, if <math>x = -1</math>, we have | |||

<math display="block"> | |||

\begin{eqnarray*} e^{-1} & = &\frac 1{2!} - \frac 1{3!} + \cdots + \frac{(-1)^n}{n!} + | |||

\cdots \\ | |||

& = & .3678794\ . | |||

\end{eqnarray*} | |||

</math> | |||

Therefore, the probability that there is no fixed point, i.e., that | |||

none of the <math>n</math> people gets his own hat back, is equal to the sum of the first <math>n</math> | |||

terms in the expression for <math>e^{-1}</math>. This series converges very fast. Calculating | |||

the partial sums for <math>n = 3</math> to 10 gives the data in [[#table 3.7 |Table]]. | |||

<span id="table 3.7"/> | |||

{|class="table" | |||

|+ Hat check problem. | |||

|- | |||

||| Probability that no one | |||

|- | |||

|n || gets his own hat back | |||

|- | |||

|3 || .333333 | |||

|- | |||

|4 || .375 | |||

|- | |||

|5 || .366667 | |||

|- | |||

|6 || .368056 | |||

|- | |||

|7 || .367857 | |||

|- | |||

|8 || .367882 | |||

|- | |||

|9 || .367879 | |||

|- | |||

|10 || .367879 | |||

|} | |||

After <math>n = 9</math> the probabilities are essentially the same to six significant figures. | |||

Interestingly, the probability of no fixed point alternately increases and decreases | |||

as <math>n</math> increases. Finally, we note that our exact results are in good agreement with | |||

our simulations reported in the previous section. | |||

===Choosing a Sample Space=== | |||

We now have some of the tools needed to accurately describe sample spaces and to assign | |||

probability functions to those sample spaces. Nevertheless, in some cases, the | |||

description and assignment process is somewhat arbitrary. Of course, it is to be | |||

hoped that the description of the sample space and the subsequent assignment of a | |||

probability function will yield a model which accurately predicts what would happen if | |||

the experiment were actually carried out. As the following examples show, there are | |||

situations in which “reasonable” descriptions of the sample space do not produce a | |||

model which fits the data. | |||

In Feller's book,<ref group="Notes" >W. Feller, ''Introduction to Probability Theory and | |||

Its Applications'' vol. 1, 3rd ed. (New York: John Wiley and Sons, 1968), p. 41</ref> a pair | |||

of models is given which describe arrangements of certain kinds of elementary | |||

particles, such as photons and protons. It turns out that experiments | |||

have shown that certain types of elementary particles exhibit behavior which is accurately described | |||

by one model, called ''“Bose-Einstein statistics,”'' while other | |||

types of elementary particles can be modelled using ''“Fermi-Dirac | |||

statistics.”'' Feller says: | |||

<blockquote> We have here an instructive example of the impossibility of selecting or justifying probability models by ''a priori'' arguments. In fact, no pure reasoning could tell that photons and protons would not obey the same probability laws. | |||

</blockquote> | |||

We now give some examples of this description and assignment process. | |||

<span id="exam 3.14"/> | |||

'''Example''' | |||

In the quantum mechanical model of the helium atom, various | |||

parameters can be used to classify the energy states of the atom. In the triplet spin state (<math>S = | |||

1</math>) with orbital angular momentum 1 (<math>L = 1</math>), there are three possibilities, 0, 1, or 2, for | |||

the total angular momentum (<math>J</math>). (It is not assumed that the reader knows what any | |||

of this means; in fact, the example is more illustrative if the reader does ''not'' | |||

know anything about quantum mechanics.) We would like to assign probabilities to the | |||

three possibilities for <math>J</math>. The reader is undoubtedly resisting the idea of | |||

assigning the probability of <math>1/3</math> to each of these outcomes. She should now ask | |||

herself why she is resisting this assignment. The answer is probably because she | |||

does not have any “intuition” (i.e., experience) about the way in which helium atoms | |||

behave. In fact, in this example, the probabilities <math>1/9,\ 3/9,</math> and <math>5/9</math> are | |||

assigned by the theory. The theory gives these assignments because these frequencies | |||

were observed ''in experiments'' and further parameters were developed in the theory | |||

to allow these frequencies to be predicted. | |||

<span id="exam 3.15"/> | |||

'''Example''' | |||

Suppose two pennies are flipped once each. There are several “reasonable” ways to describe the sample space. One way is to count the | |||

number of heads in the outcome; in this case, the sample space can be written <math>\{0, 1, | |||

2\}</math>. Another description of the sample space is the set of all ordered pairs of | |||

<math>H</math>'s and <math>T</math>'s, i.e., | |||

<math display="block"> \{(H,H), (H, T), (T, H), (T, T)\}. </math> | |||

Both of these | |||

descriptions are accurate ones, but it is easy to see that (at most) one of these, if | |||

assigned a constant probability function, can claim to accurately model reality. In | |||

this case, as opposed to the preceding example, the reader will probably say that the | |||

second description, with each outcome being assigned a probability of <math>1/4</math>, is the | |||

“right” description. This conviction is due to experience; there is no proof that | |||

this is the way reality works. | |||

The reader is also referred to [[exercise:3603a3b6c0 |Exercise]] for another example of this process. | |||

===Historical Remarks=== | |||

The binomial coefficients have a long and colorful history leading up to Pascal's ''Treatise on the Arithmetical Triangle,''<ref group="Notes" >B. Pascal, ''Traité du Triangle Arithmétique'' (Paris: Desprez, 1665).</ref> where Pascal developed many important properties of these numbers. This history is set forth in the book ''Pascal's Arithmetical Triangle'' by A. W. F. Edwards.<ref group="Notes" >A. W. F. Edwards, ''Pascal's Arithmetical Triangle'' (London: Griffin, 1987).</ref> Pascal wrote his triangle in the form shown in [[#table 3.8 |Table]]. | |||

<span id="table 3.8"/> | |||

{| class="table table-borderless text-center" | |||

|+ Pascal's triangle. | |||

|- | |||

|1 || 1 || 1 || 1 || 1 || 1 || 1 || 1 || 1 || 1 | |||

|- | |||

| 1 || 2 || 3 || 4 || 5 || 6 || 7 || 8 || 9 | |||

|- | |||

| 1 || 3 || 6 || 10 || 15 || 21 || 28 || 36 | |||

|- | |||

| 1 || 4 || 10 || 20 || 35 || 56 || 84 | |||

|- | |||

| 1 || 5 || 15 || 35 || 70 || 126 | |||

|- | |||

| 1 || 6 || 21 || 56 || 126 | |||

|- | |||

| 1 || 7 || 28 || 84 | |||

|- | |||

| 1 || 8 || 36 | |||

|- | |||

| 1 || 9 | |||

|} | |||

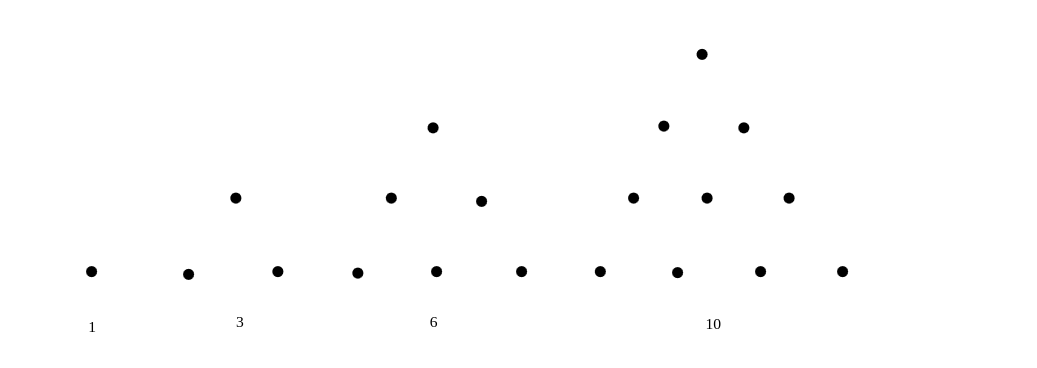

Edwards traces three different ways that the binomial coefficients arose. He refers to these as the ''figurate numbers,'' the ''combinatorial numbers,'' and the ''binomial numbers.'' They are all names for the same thing (which we have called binomial coefficients) but that they are all the same was not appreciated until the sixteenth century. The ''figurate numbers'' date back to the Pythagorean interest in number | |||

patterns around 540 BC. The Pythagoreans considered, for example, triangular patterns shown in [[#fig 3.10|Figure]]. The sequence of numbers | |||

<math display="block"> | |||

1, 3, 6, 10, \dots | |||

</math> | |||

obtained as the number of points in each triangle are called ''triangular numbers.'' From the triangles it is clear that the <math>n</math>th triangular | |||

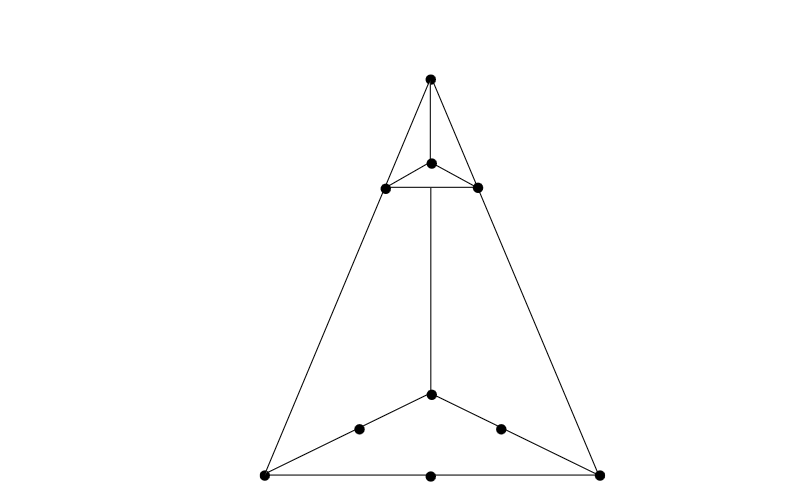

number is simply the sum of the first <math>n</math> integers. The ''tetrahedral numbers'' are the sums of the triangular numbers and were obtained by the Greek mathematicians Theon and Nicomachus at the beginning of the second | |||

century BC. The tetrahedral number 10, for example, has the geometric | |||

representation shown in [[#fig 3.11|Figure]]. The first three types of figurate numbers can be | |||

represented in tabular form as shown in [[#table 3.9 |Table]]. | |||

<div id="Pythagorean triangular patterns." class="d-flex justify-content-center"> | |||

[[File:guide_e6d15_PSfig3-10.png | 600px | thumb | Pythagorean triangular patterns. ]] | |||

</div> | |||

<div id="fig3.11" class="d-flex justify-content-center"> | |||

[[File:guide_e6d15_PSfig3-11.png | 600px | thumb |Geometric representation of the tetrahedral number 10. ]] | |||

</div> | |||

<span id="table 3.9"/> | |||

{| class="table" | |||

|- | |||

| Natural numbers || 1 || 2 || 3 || 4 || 5 || 6 || 7 || 8 || 9 | |||

|- | |||

|Triangular numbers || 1 || 3 || 6 || 10 || 15 || 21 || 28 || 36 || 45 | |||

|- | |||

| Tetrahedral numbers || 1 || 4 || 10 || 20 || 35 || 56 || 84 || 120 || 165 | |||

|} | |||

These numbers provide the first four rows of Pascal's triangle, but the table was | |||

not to be completed in the West until the sixteenth century. | |||

In the East, Hindu mathematicians began to encounter the binomial coefficients in | |||

combinatorial problems. Bhaskara in his ''Lilavati'' of 1150 gave a rule to find | |||

the number of medicinal preparations using 1, 2, 3, 4, 5, or 6 possible | |||

ingredients.<ref group="Notes" >ibid., p. 27.</ref> His rule is equivalent to our formula | |||

<math display="block"> | |||

{n \choose r} = \frac{(n)_r}{r!}\ . | |||

</math> | |||

<div id="fig 3.12" class="d-flex justify-content-center"> | |||

[[File:guide_e6d15_PSfig3-33.png | 400px | thumb | Chu Shih-chieh's triangle. [From J. Needham, ''Science | |||

and Civilization in China,'' vol. 3 (New York: Cambridge University Press, 1959), p. 135. | |||

Reprinted with permission. ]] | |||

</div> | |||

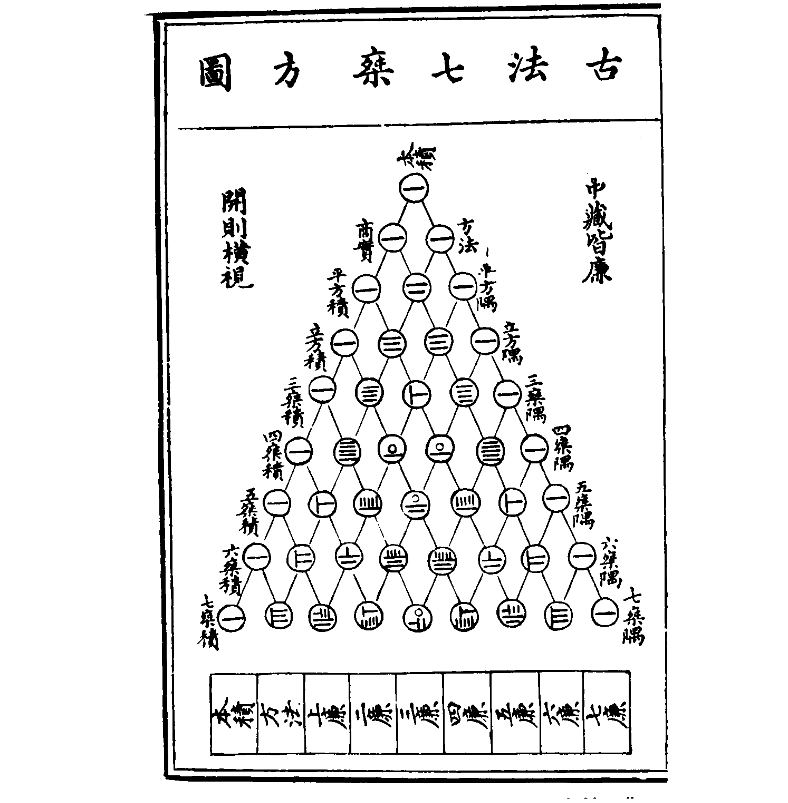

The binomial numbers as coefficients of <math>(a + b)^n</math> appeared in the works of | |||

mathematicians in China around 1100. There are references about this time to “the | |||

tabulation system for unlocking binomial coefficients.” The triangle to provide the | |||

coefficients up to the eighth power is given by Chu Shih-chieh in a book | |||

written around 1303 (see [[#fig 3.12|Figure]]).<ref group="Notes" >J. Needham, ''Science and | |||

Civilization in China,'' vol. 3 (New York: Cambridge University Press, 1959), p. 135.</ref> | |||

The original manuscript of Chu's book has been lost, but copies have survived. | |||

Edwards notes that there is an error in this copy of Chu's triangle. Can you find | |||

it? ('' Hint'': Two numbers which should be equal are not.) Other copies do not | |||

show this error. | |||

The first appearance of Pascal's triangle in the West seems to have come from | |||

calculations of Tartaglia in calculating the number of possible ways that <math>n</math> dice | |||

might turn up.<ref group="Notes" >N. Tartaglia, ''General Trattato di Numeri et | |||

Misure'' (Vinegia, 1556).</ref> For one die the answer is clearly 6. For two dice the | |||

possibilities may be displayed as shown in [[#table 3.10 |Table]]. | |||

<span id="table 3.10"/> | |||

{| class="table table-borderless text-center" | |||

|- | |||

| 11 | |||

|- | |||

| 12 || 22 | |||

|- | |||

|13 ||23 || 33 | |||

|- | |||

| 14 || 24 || 34 || 44 | |||

|- | |||

| 15 || 25 || 35|| 45 || 55 | |||

|- | |||

| 16 || 26 || 36 || 46 || 56 || 66 | |||

|} | |||

Displaying them this way suggests the sixth triangular number <math>1 + 2 + 3 + 4 + 5 + 6 = | |||

21</math> for the throw of 2 dice. Tartaglia “on the first day of Lent, 1523, in Verona, | |||

having thought about the problem all night,”<ref group="Notes" >Quoted in Edwards, op. cit., p. 37.</ref> realized | |||

that the extension of the figurate table gave the answers for <math>n</math> dice. The problem had suggested | |||

itself to Tartaglia from watching people casting their own horoscopes by means of a '' Book of | |||

Fortune,'' selecting verses by a process which included noting the numbers on the faces of three | |||

dice. The 56 ways that three dice can fall were set out on each page. The way the numbers were | |||

written in the book did not suggest the connection with figurate numbers, but a method | |||

of enumeration similar to the one we used for 2 dice does. Tartaglia's table was not | |||

published until 1556. | |||

A table for the binomial coefficients was published in 1554 by the German | |||

mathematician Stifel.<ref group="Notes" >M. Stifel, '' Arithmetica Integra'' (Norimburgae, | |||

1544).</ref> Pascal's triangle appears also in Cardano's ''Opus novum'' of | |||

1570.<ref group="Notes" >G. Cardano, ''Opus Novum de Proportionibus Numerorum'' (Basilea, | |||

1570).</ref> Cardano was interested in the problem of finding the number of ways to choose | |||

<math>r</math> objects out of <math>n</math>. Thus by the time of Pascal's work, his triangle had appeared | |||

as a result of looking at the figurate numbers, the combinatorial numbers, and the | |||

binomial numbers, and the fact that all three were the same was presumably pretty well | |||

understood. | |||

Pascal's interest in the binomial numbers came from his | |||

letters with Fermat concerning a problem known as the problem | |||

of points. This problem, and the correspondence between Pascal and | |||

Fermat, were discussed in Chapter [[guide:4f3a4e96c3|Discrete Probability Distributions]]. The reader will recall that this problem can be | |||

described as follows: Two players A and B are playing a sequence of games and the first player to win | |||

<math>n</math> games wins the match. It is desired to find the probability that A wins the match at a time when | |||

A has won <math>a</math> games and B has won <math>b</math> games. (See [[exercise:92bbd1fbc0|Exercise]] - [[exercise:1427ecdd1c|Exercise]].) | |||

Pascal solved the problem by backward induction, much the way we would do today in writing a | |||

computer program for its solution. He referred to the combinatorial method of Fermat which | |||

proceeds as follows: If A needs <math>c</math> games and B needs <math>d</math> games to win, we require that the | |||

players continue to play until they have played <math>c + d - 1</math> games. The winner in this | |||

extended series will be the same as the winner in the original series. The | |||

probability that A wins in the extended series and hence in the original series is | |||

<math display="block"> | |||

\sum_{r = c}^{c + d - 1} \frac 1{2^{c + d - 1}} {{c + d - 1} \choose r}\ . | |||

</math> | |||

Even at the time of the letters Pascal seemed to understand this formula. | |||

Suppose that the first player to win <math>n</math> games wins the match, and | |||

suppose that each player has put up a stake of <math>x</math>. Pascal studied the value of | |||

winning a particular game. By this he meant the increase in the expected winnings of | |||

the winner of the particular game under consideration. He showed that the value of | |||

the first game is | |||

<math display="block"> | |||

\frac {1\cdot3\cdot5\cdot\dots\cdot(2n - 1)}{2\cdot4\cdot6\cdot\dots\cdot(2n)}x\ . | |||

</math> | |||

His proof of this seems to use Fermat's formula and the fact that the above ratio | |||

of products of odd to products of even numbers is equal to the probability of exactly | |||

<math>n</math> heads in <math>2n</math> tosses of a coin. (See [[exercise:802078ad82 |Exercise]].) | |||

Pascal presented Fermat with the table shown in [[#table 3.11 |Table]]. | |||

<span id="table 3.11"/> | |||

{|class="table table-borderless" | |||

|+ Pascal's solution for the problem of points. | |||

|- | |||

| | |||

|colspan="6" class="text-center" |If each one staken 256 in | |||

|- | |||

|From my opponent's 256 positions I get, for the | |||

|class="border-bottom"|6 games | |||

|class="border-bottom" |5 games | |||

|class="border-bottom" |4 games | |||

|class="border-bottom" |3 games | |||

|class="border-bottom" |2 games | |||

|class="border-bottom" |1 game | |||

|- | |||

|1st game || 63 || 70 || 80 || 96 || 128 || 256 | |||

|- | |||

|2nd game || 63 || 70 || 80 || 96 || 128 | |||

|- | |||

|3rd game || 56 || 60 || 64 || 64 | |||

|- | |||

|4th game || 42 || 40 || 32 | |||

|- | |||

|5th game || 24 || 16 | |||

|- | |||

|6th game || 8 | |||

|} | |||

He states: | |||

<blockquote> | |||

You will see as always, that the value of the first game is equal to that of | |||

the second which is easily shown by combinations. You will see, in the same way, that | |||

the numbers in the first line are always increasing; so also are those in the second; | |||

and those in the third. But those in the fourth line are decreasing, and those in the | |||

fifth, etc. This seems odd.<ref group="Notes" >F. N. David, op. cit., p. 235.</ref> | |||

</blockquote> | |||

The student can pursue this question further using the computer and Pascal's | |||

backward iteration method for computing the expected payoff at any point in the series. | |||

In his treatise, Pascal gave a formal proof of Fermat's combinatorial formula as | |||

well as proofs of many other basic properties of binomial numbers. Many of his proofs | |||

involved induction and represent some of the first proofs by this method. His book | |||

brought together all the different aspects of the numbers in the Pascal triangle as | |||

known in 1654, and, as Edwards states, “That the Arithmetical Triangle should bear | |||

Pascal's name cannot be disputed.”<ref group="Notes" >A. W. F. Edwards, op. cit., p. ix.</ref> | |||

The first serious study of the binomial distribution was undertaken by James Bernoulli in his ''Ars Conjectandi'' published in 1713.<ref group="Notes" >J. Bernoulli, ''Ars Conjectandi'' (Basil: Thurnisiorum, 1713).</ref> We shall return to this work in the historical remarks in Chapter [[guide:1cf65e65b3|Law of Large Numbers]]. | |||

==General references== | |||

{{cite web |url=https://math.dartmouth.edu/~prob/prob/prob.pdf |title=Grinstead and Snell’s Introduction to Probability |last=Doyle |first=Peter G.|date=2006 |access-date=June 6, 2024}} | |||

==Notes== | |||

{{Reflist|group=Notes}} | |||

Latest revision as of 03:42, 12 June 2024

Having mastered permutations, we now consider combinations. Let [math]U[/math] be a set with [math]n[/math] elements; we want to count the number of distinct subsets of the set [math]U[/math] that have exactly [math]j[/math] elements. The empty set and the set [math]U[/math] are considered to be subsets of [math]U[/math]. The empty set is usually denoted by [math]\phi[/math].

Example Let [math]U = \{a,b,c\}[/math]. The subsets of [math]U[/math] are

Binomial Coefficients

The number of distinct subsets with [math]j[/math] elements that can be chosen from a set with [math]n[/math] elements is denoted by [math]{n \choose j}[/math], and is pronounced “[math]n[/math] choose [math]j[/math].” The number [math]n \choose j[/math] is called a binomial coefficient. This terminology comes from an application to algebra which will be discussed later in this section. In the above example, there is one subset with no elements, three subsets with exactly 1 element, three subsets with exactly 2 elements, and one subset with exactly 3 elements. Thus, [math]{3 \choose 0} = 1[/math], [math]{3 \choose 1} = 3[/math], [math]{3 \choose 2} = 3[/math], and [math]{3 \choose 3} = 1[/math]. Note that there are [math]2^3 = 8[/math] subsets in all. (We have already seen that a set with [math]n[/math] elements has [math]2^n[/math] subsets; (see Exercise). It follows that

Assume that [math]n \gt 0[/math]. Then, since there is only one way to choose a set with no elements and only one way to choose a set with [math]n[/math] elements, the remaining values of [math]n \choose j[/math] are determined by the following recurrence relation:

For integers [math]n[/math] and [math]j[/math], with [math]0 \lt j \lt n[/math], the binomial coefficients satisfy:

We wish to choose a subset of [math]j[/math] elements. Choose an element [math]u[/math] of [math]U[/math]. Assume first that we do not want [math]u[/math] in the subset. Then we must choose the [math]j[/math] elements from a set of [math]n - 1[/math] elements; this can be done in [math]{{n-1} \choose j}[/math] ways. On the other hand, assume that we do want [math]u[/math] in the subset. Then we must choose the other [math]j - 1[/math] elements from the remaining [math]n - 1[/math] elements of [math]U[/math]; this can be done in [math]{{n-1} \choose {j - 1}}[/math] ways. Since [math]u[/math] is either in our subset or not, the number of ways that we can choose a subset of [math]j[/math] elements is the sum of the number of subsets of [math]j[/math] elements which have [math]u[/math] as a member and the number which do not---this is what Equation \ref{eq 3.3} states.

The binomial coefficient [math]n \choose j[/math] is defined to be 0, if [math]j \lt 0[/math] or if [math]j \gt n[/math]. With this definition, the restrictions on [math]j[/math] in Theorem are unnecessary.

Pascal's Triangle

The relation, together with the knowledge that

determines completely the numbers [math]n \choose j[/math]. We can use these relations to determine the famous triangle of Pascal, which exhibits all these numbers in matrix form (see Figure).

The [math]n[/math]th row of this triangle has the entries [math]n \choose 0[/math], [math]n \choose 1[/math],..., [math]n \choose n[/math]. We know that the first and last of these numbers are 1. The remaining numbers are determined by the recurrence relation Equation \ref{eq 3.3}; that is, the entry [math]{n \choose j}[/math] for [math]0 \lt j \lt n[/math] in the [math]n[/math]th row of Pascal's triangle is the sum of the entry immediately above and the one immediately to its left in the [math](n - 1)[/math]st row. For example, [math]{5 \choose 2} = 6 + 4 = 10[/math]. This algorithm for constructing Pascal's triangle can be used to write a computer program to compute the binomial coefficients. You are asked to do this in Exercise.

While Pascal's triangle provides a way to construct recursively the binomial coefficients, it is also possible to give a formula for [math]n \choose j[/math].

The binomial coefficients are given by the formula

Each subset of size [math]j[/math] of a set of size [math]n[/math] can be ordered in [math]j![/math] ways. Each of these orderings is a [math]j[/math]-permutation of the set of size [math]n[/math]. The number of [math]j[/math]-permutations is [math](n)_j[/math], so the number of subsets of size [math]j[/math] is

The above formula can be rewritten in the form

This immediately shows that

When using Equation \ref{eq 3.4} in the calculation of [math]{n \choose j}[/math], if one alternates the multiplications and divisions, then all of the intermediate values in the calculation are integers. Furthermore, none of these intermediate values exceed the final value. (See Exercise.)

Another point that should be made concerning Equation \ref{eq 3.4} is that if it is

used to define the binomial coefficients, then it is no longer necessary to require

[math]n[/math] to be a positive integer. The variable [math]j[/math] must still be a non-negative integer under

this definition. This idea is useful when extending the Binomial Theorem to general exponents.

(The Binomial Theorem for non-negative integer exponents is given below as Theorem.)

Poker Hands

Example kind beats a full house. A poker hand is a random subset of 5 elements from a deck of 52 cards. A hand has four of a kind if it has four cards with the same value---for example, four sixes or four kings. It is a full house if it has three of one value and two of a second---for example, three twos and two queens. Let us see which hand is more likely. How many hands have four of a kind? There are 13 ways that we can specify the value for the four cards. For each of these, there are 48 possibilities for the fifth card. Thus, the number of four-of-a-kind hands is [math]13 \cdot 48 = 624[/math]. Since the total number of possible hands is [math]{52 \choose 5} = 2598960[/math], the probability of a hand with four of a kind is [math]624/2598960 = .00024[/math].

Now consider the case of a full house; how many such hands are there? There are 13

choices for the value which occurs three times; for each of these there are

[math]{4 \choose 3} = 4[/math] choices for the particular three cards of this value that are in

the hand. Having picked these three cards, there are 12 possibilities for the value

which occurs twice; for each of these there are [math]{4 \choose 2} = 6[/math] possibilities for

the particular pair of this value. Thus, the number of full houses is [math]13 \cdot 4

\cdot 12 \cdot 6 = 3744[/math], and the probability of obtaining a hand with a full house is

[math]3744/2598960 = .0014[/math]. Thus, while both types of hands are unlikely, you are six

times more likely to obtain a full house than four of a kind.

Bernoulli Trials

Our principal use of the binomial coefficients will occur in the study of one of the important chance processes called Bernoulli trials.

A Bernoulli trials process is a sequence of [math]n[/math] chance experiments such that

- Each experiment has two possible outcomes, which we may call success and failure.

- The probability [math]p[/math] of success on each experiment is the same for each experiment, and this probability is not affected by any knowledge of previous outcomes. The probability [math]q[/math] of failure is given by [math]q = 1 - p[/math].

Example

- A coin is tossed ten times. The two possible outcomes are heads and tails. The probability of heads on any one toss is 1/2.

- An opinion poll is carried out by asking 1000 people, randomly chosen from the population, if they favor the Equal Rights Amendment---the two outcomes being yes and no. The probability [math]p[/math] of a yes answer (i.e., a success) indicates the proportion of people in the entire population that favor this amendment.

- A gambler makes a sequence of 1-dollar bets, betting each time on black at roulette at Las Vegas. Here a success is winning 1 dollar and a failure is losing 1 dollar. Since in American roulette the gambler wins if the ball stops on one of 18 out of 38 positions and loses otherwise, the probability of winning is [math]p = 18/38 = .474[/math].

To analyze a Bernoulli trials process, we choose as our sample space a binary tree and assign a probability distribution to the paths in this tree. Suppose, for example, that we have three Bernoulli trials. The possible outcomes are indicated in the tree diagram shown in Figure. We define [math]X[/math] to be the random variable which represents the outcome of the process, i.e., an ordered triple of S's and F's. The probabilities assigned to the branches of the tree represent the probability for each individual trial. Let the outcome of the [math]i[/math]th trial be denoted by the random variable [math]X_i[/math], with distribution function [math]m_i[/math]. Since we have assumed that outcomes on any one trial do not affect those on another, we assign the same probabilities at each level of the tree. An outcome [math]\omega[/math] for the entire experiment will be a path through the tree. For example, [math]\omega_3[/math] represents the outcomes SFS. Our frequency interpretation of probability would lead us to expect a fraction [math]p[/math] of successes on the first experiment; of these, a fraction [math]q[/math] of failures on the second; and, of these, a fraction [math]p[/math] of successes on the third experiment. This suggests assigning probability [math]pqp[/math] to the outcome [math]\omega_3[/math]. More generally, we assign a distribution function [math]m(\omega)[/math] for paths [math]\omega[/math] by defining [math]m(\omega)[/math] to be the product of the branch probabilities along the path [math]\omega[/math]. Thus, the probability that the three events S on the first trial, F on the second trial, and S on the third trial occur is the product of the probabilities for the individual events. We shall see in the next chapter that this means that the events involved are independent in the sense that the knowledge of one event does not affect our prediction for the occurrences of the other events.

Binomial Probabilities

We shall be particularly interested in the probability that in [math]n[/math] Bernoulli trials there are exactly [math]j[/math] successes. We denote this probability by [math]b(n,p,j)[/math]. Let us calculate the particular value [math]b(3,p,2)[/math] from our tree measure. We see that there are three paths which have exactly two successes and one failure, namely [math]\omega_2[/math], [math]\omega_3[/math], and [math]\omega_5[/math]. Each of these paths has the same probability [math]p^2q[/math]. Thus [math]b(3,p,2) = 3p^2q[/math]. Considering all possible numbers of successes we have

We can, in the same manner, carry out a tree measure for [math]n[/math] experiments and determine [math]b(n,p,j)[/math] for the general case of [math]n[/math] Bernoulli trials.

Given [math]n[/math] Bernoulli trials with probability [math]p[/math] of success on each experiment, the probability of exactly [math]j[/math] successes is

We construct a tree measure as described above. We want to find the sum of the probabilities for all paths which have exactly [math]j[/math] successes and [math]n - j[/math] failures. Each such path is assigned a probability [math]p^j q^{n - j}[/math]. How many such paths are there? To specify a path, we have to pick, from the [math]n[/math] possible trials, a subset of [math]j[/math] to be successes, with the remaining [math]n-j[/math] outcomes being failures. We can do this in [math]n \choose j[/math] ways. Thus the sum of the probabilities is

Example probability that exactly three heads turn up? The answer is

Example that we obtain exactly one 6? We treat this as Bernoulli trials with success = “rolling a 6” and failure = “rolling some number other than a 6.” Then [math]p = 1/6[/math], and the probability of exactly one success in four trials is

To compute binomial probabilities using the computer, multiply the function choose[math](n,k)[/math] by [math]p^kq^{n - k}[/math]. The program BinomialProbabilities prints out the binomial probabilities [math]b(n, p, k)[/math] for [math]k[/math] between [math]kmin[/math] and [math]kmax[/math], and the sum of these probabilities. We have run this program for [math]n = 100[/math], [math]p = 1/2[/math], [math]kmin = 45[/math], and [math]kmax = 55[/math]; the output is shown in Table. Note that the individual probabilities are quite small. The probability of exactly 50 heads in 100 tosses of a coin is about .08. Our intuition tells us that this is the most likely outcome, which is correct; but, all the same, it is not a very likely outcome.

| [math]k[/math] | [math]b(n,p,k)[/math] |

| 45 | .0485 |

| 46 | .0580 |

| 47 | .0666 |

| 48 | .0735 |

| 49 | .0780 |

| 50 | .0796 |

| 51 | .0780 |

| 52 | .0735 |

| 53 | .0666 |

| 54 | .0580 |

| 55 | .0485 |

Binomial Distributions

Let [math]n[/math] be a positive integer, and let [math]p[/math] be a real number between 0 and 1. Let [math]B[/math] be the random variable which counts the number of successes in a Bernoulli trials process with parameters [math]n[/math] and [math]p[/math]. Then the distribution [math]b(n, p, k)[/math] of [math]B[/math] is called the binomial distribution.

We can get a better idea about the binomial distribution by graphing this distribution for different values of [math]n[/math] and [math]p[/math] (see Figure). The plots in this figure were generated using the program BinomialPlot.

We have run this program for [math]p = .5[/math] and [math]p = .3[/math]. Note that even for [math]p = .3[/math]

the graphs are quite symmetric. We shall have an explanation for this in

Chapter. We also note that the highest probability occurs around the

value [math]np[/math], but that these highest probabilities get smaller as [math]n[/math] increases. We

shall see in Chapter Expected Value and Variance that [math]np[/math] is the mean or expected

value of the binomial distribution [math]b(n,p,k)[/math].

The following example gives a nice way to see the binomial distribution, when [math]p =

1/2[/math].

Example

A Galton board is a board in which a large number of BB-shots are

dropped from a chute at the top of the board and deflected off a number of pins

on their way down to the bottom of the board. The final position of each slot

is the result of a number of random deflections either to the left or the

right. We have written a program GaltonBoard to simulate this

experiment.

We have run the program for the case of 20 rows of pins and 10,00 shots being

dropped. We show the result of this simulation in Figure.

Note that if we write 0 every time the shot is deflected to the left, and 1 every time it is deflected to the right, then the path of the shot can be described by a sequence of 0's and 1's of length [math]n[/math], just as for the [math]n[/math]-fold coin toss.

The distribution shown in Figure is an example of an empirical

distribution, in the sense that it comes about by means of a sequence of

experiments. As expected, this empirical distribution resembles the corresponding

binomial distribution with parameters [math]n = 20[/math] and [math]p = 1/2[/math].

Hypothesis Testing

Example Suppose that ordinary aspirin has been found effective against headaches 60 percent of the time, and that a drug company claims that its new aspirin with a special headache additive is more effective. We can test this claim as follows: we call their claim the alternate hypothesis, and its negation, that the additive has no appreciable effect, the null hypothesis. Thus the null hypothesis is that [math]p = .6[/math], and the alternate hypothesis is that [math]p \gt .6[/math], where [math]p[/math] is the probability that the new aspirin is effective.

We give the aspirin to [math]n[/math] people to take when they have a headache. We want to find a number [math]m[/math], called the critical value for our experiment, such that we reject the null hypothesis if at least [math]m[/math] people are cured, and otherwise we accept it. How should we determine this critical value?

First note that we can make two kinds of errors. The first, often called a type 1 error in statistics, is to reject the null hypothesis when in fact it is true. The second, called a type 2 error, is to accept the null hypothesis when it is false. To determine the probability of both these types of errors we introduce a function [math]\alpha(p)[/math], defined to be the probability that we reject the null hypothesis, where this probability is calculated under the assumption that the null hypothesis is true. In the present case, we have

Note that [math]\alpha(.6)[/math] is the probability of a type 1 error, since this is the

probability of a high number of successes for an ineffective additive. So for a given

[math]n[/math] we want to choose [math]m[/math] so as to make [math]\alpha(.6)[/math] quite small, to reduce the

likelihood of a type 1 error. But as [math]m[/math] increases above the most probable value [math]np

= .6n[/math], [math]\alpha(.6)[/math], being the upper tail of a binomial distribution, approaches 0.

Thus increasing [math]m[/math] makes a type 1 error less likely.

Now suppose that the additive really is effective, so that [math]p[/math] is appreciably

greater than .6; say [math]p = .8[/math]. (This alternative value of [math]p[/math] is chosen arbitrarily;

the following calculations depend on this choice.) Then choosing [math]m[/math] well below [math]np =

.8n[/math] will increase [math]\alpha(.8)[/math], since now

[math]\alpha(.8)[/math] is all but the lower tail of a binomial distribution. Indeed, if we put

[math]\beta(.8) = 1 - \alpha(.8)[/math], then [math]\beta(.8)[/math] gives us the probability of a type 2

error, and so decreasing [math]m[/math] makes a type 2 error less likely.

The manufacturer would like to guard against a type 2 error, since if such an

error is made, then the test does not show that the new drug is better, when in fact

it is. If the alternative value of [math]p[/math] is chosen closer to the value of [math]p[/math] given in

the null hypothesis (in this case [math]p =.6[/math]), then for a given test population, the

value of [math]\beta[/math] will increase.

So, if the manufacturer's statistician chooses an alternative value for [math]p[/math] which is

close to the value in the null hypothesis, then it will be an expensive proposition

(i.e., the test population will have to be large) to reject the null hypothesis with a

small value of [math]\beta[/math].

What we hope to do then, for a given test population [math]n[/math], is to choose a value of

[math]m[/math], if possible, which makes both these probabilities small. If we make a type 1

error we end up buying a lot of essentially ordinary aspirin at an inflated price; a

type 2 error means we miss a bargain on a superior medication. Let us say that we

want our critical number [math]m[/math] to make each of these undesirable cases less than 5

percent probable.

We write a program PowerCurve to plot, for [math]n = 100[/math] and

selected values of

[math]m[/math], the function [math]\alpha(p)[/math], for [math]p[/math] ranging from .4 to 1. The result is shown in

Figure. We include in our graph a box (in dotted lines) from .6 to .8,

with bottom and top at heights .05 and .95. Then a value for [math]m[/math] satisfies our

requirements if and only if the graph of [math]\alpha[/math] enters the box from the bottom, and

leaves from the top (why?---which is the type 1 and which is the type 2 criterion?).

As [math]m[/math] increases, the graph of [math]\alpha[/math] moves to the right. A few experiments have

shown us that [math]m = 69[/math] is the smallest value for

[math]m[/math] that thwarts a type 1 error, while [math]m = 73[/math] is the largest which thwarts a

type 2. So we may choose our critical value between 69 and 73. If we're more intent

on avoiding a type 1 error we favor 73, and similarly we favor 69 if we regard a

type 2 error as worse. Of course, the drug company may not be happy with having as

much as a 5 percent chance of an error. They might insist on having a 1 percent chance of an error. For this we would have to increase the number [math]n[/math] of trials (see Exercise).

Binomial Expansion

We next remind the reader of an application of the binomial coefficients to algebra. This is the binomial expansion, from which we get the term binomial coefficient.

(Binomial Theorem) The quantity [math](a + b)^n[/math] can be expressed in the form

To see that this expansion is correct, write

For example, we have

We see here that the coefficients of successive powers do indeed yield Pascal's triangle.

The sum of the elements in the [math]n[/math]th row of Pascal's triangle is [math]2^n[/math]. If the elements in the [math]n[/math]th row of Pascal's triangle are added with alternating signs, the sum is 0.

Show ProofThe first statement in the corollary follows from the fact that

The first statement of the corollary tells us that the number of subsets of a set of [math]n[/math] elements is [math]2^n[/math]. We shall use the second statement in our next application of the binomial theorem. We have seen that, when [math]A[/math] and [math]B[/math] are any two events (cf. Section ),

We now extend this theorem to a more general version, which will enable us to find the probability that at least one of a number of events occurs.

Inclusion-Exclusion Principle

Let [math]P[/math] be a probability distribution on a sample space [math]\Omega[/math], and let [math]\{A_1,\ A_2,\ \dots,\ A_n\}[/math] be a finite set of events. Then

If the outcome [math]\omega[/math] occurs in at least one of the events [math]A_i[/math], its probability is added exactly once by the left side of Equation \ref{eq 3.5}. We must show that it is added exactly once by the right side of Equation \ref{eq 3.5}. Assume that [math]\omega[/math] is in exactly [math]k[/math] of the sets. Then its probability is added [math]k[/math] times in the first term, subtracted [math]k \choose 2[/math] times in the second, added [math]k \choose 3[/math] times in the third term, and so forth. Thus, the total number of times that it is added is

Hat Check Problem