Concentration of measure

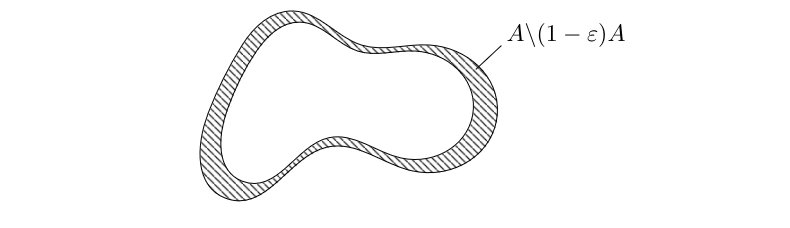

label{Ch-VC} In this chapter we denote by [math]\lambda^d[/math] the [math]d[/math]-dimensional Lebesgue measure on [math]\mathbb{R}^d[/math]. The euclidean norm we denote by [math]\|\cdot\|[/math] and balls with respect to this norm are denoted by [math]\B_r(x)[/math] and [math]\Bbar_r(x)[/math] respectively. \smallskip We start with the following observation. Let [math]A\subseteq\mathbb{R}^d[/math] be a measurable star-shaped set and assume that zero is a center. Then [math](1-\epsilon)A\subseteq A[/math] and we can interpret [math]A\backslash(1-\epsilon)A[/math] as that part of [math]A[/math] that is close to its surface. \begin{center} \begin{picture}(150,80)(0,0)

\put(0,0){

} \put(9,8){

}

\end{picture} \nopagebreak[4] \begin{fig}Volume close to the surface of a ‘nice’ subset of [math]\mathbb{R}^2[/math].\end{fig} \end{center} If we compute the measure of the latter set, then we get

and thus

which will be close to one if [math]\epsilon[/math] is small and [math]d[/math] is large. This means that in this case the measure of [math]A[/math] is located close to the surface of [math]A[/math] whereas its center contributes only little to its volume. Our first aim in this section is to quantify the latter observation in the case that [math]A[/math] is the unit ball. \smallskip

For [math]d\geqslant 1[/math] and [math]0 \lt \epsilon\leqslant1[/math] we have [math](1-\epsilon)^d\leqslant \exp(-\epsilon d)[/math].

Define [math]f\colon[0,1]\rightarrow\mathbb{R}[/math] via [math]f(x)=\exp(-x)+x-1[/math]. Then [math]f(0)=0[/math] and [math]f'(x)=1-\exp(-x)\geqslant0[/math] holds for all [math]x\in[0,1][/math]. Therefore [math]f(x)\geqslant0[/math] and thus [math]\exp(-x)\geqslant1-x\geqslant0[/math] holds for all [math]x\in[0,1][/math]. Evaluating at [math]x=\epsilon[/math] and taking the [math]d[/math]--th power finishes the proof.

Using the lemma we can now prove that most of the unit ball's volume is located close to its surface. This is very reasonable, since if we think of the ball consisting of many thin shells all of the same depth but with different radii, then the outer ones will have (in high dimensions significantly!) larger volume then the inner ones.

We compute

{{{4}}}

For the second concentration theorem we need the following lemma.

For [math]d\geqslant3[/math] we have [math]\lambda^d(\Bbar_1(0))=\frac{2\pi}{d}\cdot\lambda^{d-2}(\Bbar_1(0))[/math] where the unit balls are taken in [math]\mathbb{R}^d[/math] and [math]\mathbb{R}^{d-2}[/math], respectively.

Since [math]\B_1(0)=\{(x_1,\dots,x_d)\in\mathbb{R}^d\:;\:x_1^2+\cdots+x_d^2\leqslant1\}[/math] we may compute

After we have seen that most of the volume of the unit ball is concentrated close to its surface we show in the next result that if we designate one point of the surface as the north pole, then most of the unit ball's volume will be concentrated at its waist. As with the surface concentration, this is not surprising, since if we go from the center in the direction of the north pole, then the ball becomes thinner in all of the [math]d-1[/math] remaining dimensions. The precise result is as follows.

{{{3}}}

Show Proof{{{4}}}

The estimate in Theorem is only of value if the factor on the right hand side is strictly positive. The following lemma explains when this happens.

There exists a unique [math]a_0 \gt 0[/math] such that [math]\frac{2}{a}\exp(-\frac{a^2}{2}) \lt 1[/math] holds for every [math]a \gt a_0[/math]. We have [math]a_0\in(1,2)[/math].

The function [math]f\colon(0,\infty)\rightarrow\mathbb{R}[/math], [math]f(a)=\frac{2}{a}\exp(-\frac{a^2}{2})[/math] is strictly decreasing as both of its factors are strictly decreasing and strictly positive. We have [math]f(1)=2/\sqrt{e} \gt 1[/math] and [math]f(2)=1/\e^2 \lt 1[/math]. Consequently there is [math]a_0 \gt 0[/math] such that [math]f(a_0)=1[/math] and necessarily [math]1 \lt a_0 \lt 1[/math]. For [math]a \gt a_0[/math] we get [math]f(a) \lt 1[/math] and for [math]a \lt a_0[/math] we get [math]f(a) \gt 1[/math], so [math]a_0[/math] is unique.

Substituting [math]a=\epsilon\sqrt{d-1}[/math] shows that the factor in Theorem is strictly positive if and only if [math]\epsilon \gt a_0/\sqrt{d-1}[/math] holds with some [math]a_0\in(1,2)[/math], compare Problem. That is, as bigger as we choose the dimension, the smaller we can make [math]\epsilon \gt 0[/math]. \smallskip We however cannot let [math]\epsilon[/math] tend to zero for a fixed dimension [math]d[/math]. The interpretation of Theorem, i.e., that [math]1-\frac{2}{\epsilon\sqrt{d-1}}\exp({-\frac{\epsilon^2(d-1)}{2}})[/math] fraction of the volume of the [math]d[/math]-dimensional unit ball is located [math]\epsilon[/math]-close to the equator, is only valid if [math]\epsilon[/math] is not too small. Although this might right now be fairly obvious, we point out that in contrast to the ‘typical epsilontics’, [math]\epsilon \gt 0[/math] cannot be thought of being arbitrarily small, but what we can choose for it depends on the space's dimension to be large enough. \smallskip For later use we note the following reformulation of Theorem.

Let [math]d\geqslant3[/math] and [math]\epsilon \gt 0[/math]. Then we have

In the notation of Theorem we have

Now we will use the volume estimates from above in order to establish where points that we draw at random from the [math]d[/math]-dimensional unit ball will be located and what mutual angles between the points we can expect. To make our proofs formal, we will employ in the rest of the chapter a probability space [math](\Omega,\Sigma,\P)[/math] and random variables [math]X\colon\Omega\rightarrow\mathbb{R}^d[/math] with distribution [math]X\sim\mathcal{U}(\Bbar_1(0))[/math], compare Definition.

Let [math]d\geqslant3[/math] and assume that we draw [math]n\geqslant2[/math] points [math]x^{\scriptscriptstyle(1)},\dots,x^{\scriptscriptstyle(n)}[/math] at random from the [math]d[/math]-dimensional unit ball. Then

In view of the Surface Concentration Theorem, most of the unit ball's volume is located close to its surface and thus a point chosen uniformly at random is likely to be close to the surface. For a formal proof, we put [math]\epsilon=\frac{2\ln n}{d}[/math] and consider a random vector [math]X\sim\mathcal{U}(\Bbar_1(0))[/math]. For fixed [math]1\leqslant j\leqslant n[/math] we employ Theorem to get

Although we get a trivial estimate if we sub [math]n=1[/math] into the Theorem, its proof showed that

holds, so if [math]d[/math] is large enough and [math]\epsilon \gt 0[/math] is not too small, a single point chosen at random will be [math]\epsilon[/math]--close to the surface of [math]\Bbar_1(0)[/math] with a high probability. We continue by studying angles between points.

Let [math]d\geqslant3[/math] and assume that we draw [math]n\geqslant2[/math] points [math]x^{\scriptscriptstyle(1)},\dots,x^{\scriptscriptstyle(n)}[/math] uniformly at random from the [math]d[/math]-dimensional unit ball. Then

{{{4}}}

\end{picture}\nopagebreak[4] \begin{fig} We apply he Waist Concentration Theorem\\with respect to the new north pole.\end{fig} \end{center}

To make the above idea formal, we put [math]\epsilon=\sqrt{6\ln n}/\sqrt{d-1}[/math] and consider two random variables [math]X,Y\colon\Omega\rightarrow\mathbb{R}^d[/math] with [math]X,Y\sim\mathcal{U}(\Bbar_1(0))[/math]. Then we can find for each [math]\omega_1\in\Omega[/math] with [math]X(\omega_1)\not=0[/math] an orthogonal linear map [math]T(\omega)\colon\mathbb{R}^d\rightarrow\mathbb{R}^d[/math] with [math]T(\omega_1)X(\omega_1)=(\|X(\omega_1)\|,0,\dots,0)[/math]. Indeed, we can put [math]f^{\scriptscriptstyle(1)}=X(\omega)/\|X(\omega)\|[/math] and extend to an orthonormal basis [math]\mathcal{F}=\{ f^{\scriptscriptstyle(1)}, f^{\scriptscriptstyle(2)},\dots,f^{\scriptscriptstyle(d)}\}[/math] by first applying the basis extension theorem and then the Gram-Schmidt procedure. The map [math]T(\omega_1)[/math] is then defined as the linear extension of [math]T(\omega_1)f^{\scriptscriptstyle(j)}:=e^{\scriptscriptstyle(j)}[/math] where the [math]e^{\scriptscriptstyle(j)}[/math] are the standard unit vectors. We obtain [math]T(\omega_1)X(\omega_1)=T(\omega_1)(\|X(\omega_1)\|f^{\scriptscriptstyle(1)})=\|X(\omega_1)\|e^{\scriptscriptstyle(1)}[/math] and thus

for [math](\omega_1,\omega_2)\in\Omega\times\Omega[/math] with [math]X(\omega_1)\not=0[/math]. We observe that [math]T(\omega_1)Y\sim\mathcal{U}(\Bbar_1(0))[/math] holds for each fixed [math]\omega_1[/math] and that [math]\langle{}e^{\scriptscriptstyle(1)},T(\omega_1)Y(\omega_2)\rangle{}[/math] is the first coordinate of [math]T(\omega_1)Y(\omega_2)[/math]. Therefore we can apply Corollary to compute

where the last estimate holds as [math]1/\sqrt{6\ln n}\leqslant1[/math] is valid for [math]n\geqslant2[/math]. After observing that in the first line below necessarily [math]X(\omega_1)\not=0[/math] holds and by using [math]\|X(\omega_1)\|\leqslant 1[/math], we get

where we employed Cavalieri's Principle and the estimates from above. Let finally, random vectors [math]X^{\scriptscriptstyle(1)},\dots,X^{\scriptscriptstyle(n)}\sim\mathcal{U}(\Bbar_1(0))[/math] be given. Then

as desired.}} If we apply the estimate of Theorem for only two points [math]x[/math], [math]y\in\Bbar_1(0)[/math] we get only a probability of greater or equal to [math]1/2[/math] for [math]\langle{}x,y\rangle{}[/math] being smaller than [math]\sqrt{6\ln 2}/\sqrt{d-1}[/math]. Going through the proof of of the theorem yields however that

from where we can see that for fixed [math]\epsilon \gt 0[/math] and [math]d[/math] large enough the probability for the scalar product to be [math]\epsilon[/math]--small will be close to one which means that two points picked at random from the high dimensional unit ball will be almost orthogonal with high probability.

\smallskip We remark here, that when interpreting the scalar product as a measure for how close to orthogonal two vectors are, one usually does normalize the vectors first. In the above result we did not do so, since, by Theorem we know that the points considered in Theorem will have norm close to one with high probability, anyway. We leave a formal proof as Problem.

\section*{Problems}

\smallskip

General references

Wegner, Sven-Ake (2024). "Lecture Notes on High-Dimensional Data". arXiv:2101.05841 [math.FA].